Artificial intelligence (AI) applications in Industrial Internet of Things (IIoT) and edge computing provide advantages for real-time decisions and smarter actions in the field. See advice on edge computer selection and tools for building Artificial Intelligence of Things (AIoT) applications.

Learning Objectives

- Artificial intelligence (AI) applications for the Industrial Internet of Things, known as the Artificial Intelligence of Things (AIoT), support smarter industrial decisions.

- Select the right edge computer to support AI and machine learning (ML).

- See tools for building AIoT applications.

Industrial Internet of Things (IIoT) applications are generating more data than ever before. In many industrial applications, especially highly distributed systems located in remote areas, regularly sending large amounts of raw data to a central server might not be possible. To reduce latency, cut data communication and storage costs, and increase network availability, businesses are moving artificial intelligence (AI) and machine learning (ML) to the edge for real-time decision-making and actions in the field.

These applications that deploy AI capabilities on IoT infrastructures are called the Artificial Intelligence of Things (AIoT). Although AI models are still trained in the cloud, data collection and inferencing can be performed in the field by deploying trained AI models on edge computers. Get started by choosing the right edge computer for an industrial AIoT application.

Bringing AI to the IIoT

The advent of the Industrial Internet of Things (IIoT) has allowed a wide range of businesses to collect massive amounts of data from previously untapped sources and explore new avenues for improving productivity. By obtaining performance and environmental data from field equipment and machinery, organizations have more information to make more informed business decisions. IIoT data far exceeds a human’s ability to process it alone, which means most information goes unanalyzed and unused. Businesses and industry experts are turning to AI and ML software for IIoT applications to gain a holistic view and make smarter decisions more quickly.

Most IIoT data goes unanalyzed

The staggering number of industrial devices connected to the Internet is growing rapidly and is expected to reach 41.6 billion endpoints in 2025. What’s even more mind-boggling is how much data each device produces. Manually analyzing all the information generated by the sensors on a manufacturing assembly line could take a lifetime. It’s no wonder “less than half of an organization’s structured data is actively used in making decisions and less than 1% of its unstructured data is analyzed or used at all,” according to a May-June 2017 Harvard Business Review article, “What’s your Data Strategy?” citing “cross-industry studies.”

In the case of IP cameras, only 10% of the nearly 1.6 exabytes of video data generated each day gets analyzed. These figures indicate a staggering oversight in data analysis despite the ability to collect more information. The inability of humans to analyze all of the data produced is why businesses seek ways to incorporate AI and ML into IIoT applications.

Imagine if we relied solely on human vision to manually inspect tiny defects on golf balls on a manufacturing assembly line for 8 hours each day, 5 days a week. Even with a whole army of inspectors, each person is still naturally susceptible to fatigue and human error. Similarly, manual visual inspection of railway track fasteners, which can only be performed in the middle of the night after trains have stopped running, is not only time-consuming, but difficult to do. Manually inspecting high-voltage power lines and substation equipment also exposes human personnel to additional risks.

Combining AI with IIoT

In each of the previously discussed industrial applications, the “AIoT” offers the ability to reduce labor costs, reduce human error, and optimize preventive maintenance. The AIoT refers to the adoption of AI technologies in Internet of Things (IoT) applications for the purposes of improving operational efficiency, human-machine interactions, and data analytics and management. But what do we mean by AI and how does it fit into the IIoT?

AI is the general field of science that studies how to construct intelligent programs and machines to solve problems traditionally performed through human intelligence. AI includes ML, which is a specific subset of AI that enables systems to automatically learn and improve through experience without being programmed to do so, such as through various algorithms and neural networks. Another related term is “deep learning” (DL), which is a subset of ML in which multilayered neural networks learn from vast amounts of data.

Since AI is such a broad discipline, the following discussion focuses on how computer vision or AI-powered video analytics, other subfields of AI often used in conjunction with ML, are used for classification and recognition in industrial applications.

From data reading in remote monitoring and preventive maintenance, to identifying vehicles for controlling traffic signals in intelligent transportation systems, to agricultural drones and outdoor patrol robots, to automatic optical inspection (AOI) of tiny defects in golf balls and other products, computer vision and video analytics are unleashing greater productivity and efficiency for industrial applications.

Moving AI to the IIoT edge

The proliferation of IIoT systems is generating massive amounts of data. For example, the multitude of sensors and devices in a large oil refinery generates 1 TB of raw data per day. Sending all this raw data back to a public cloud or private server for storage or processing would require considerable bandwidth, availability and power consumption. In many industrial applications, especially highly distributed systems located in remote areas, constantly sending large amounts of data to a central server is not possible.
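To put the refinery figure in perspective, a quick calculation converts 1 TB per day into the sustained uplink rate it implies. This is a rough sketch that assumes a decimal terabyte (10^12 bytes) and an evenly spread load, and it ignores protocol overhead. The function name is illustrative only.

```python
def sustained_mbps(bytes_per_day: float) -> float:
    """Average link rate in megabits per second needed to ship a daily volume."""
    seconds_per_day = 24 * 60 * 60  # 86,400 s
    bits_per_day = bytes_per_day * 8
    return bits_per_day / seconds_per_day / 1e6


ONE_TB = 1e12  # assumption: decimal terabyte (10**12 bytes)

rate = sustained_mbps(ONE_TB)
print(f"{rate:.1f} Mbit/s sustained")  # about 92.6 Mbit/s
```

Under these assumptions, a single site would need roughly 93 Mbit/s of uplink around the clock, which can be difficult to sustain over the links available in remote areas.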

Even if companies have the bandwidth and sufficient infrastructure, which is very expensive to deploy and maintain, there would still be substantial latency in data transmission and analysis. Mission-critical industrial applications must be able to analyze raw data as quickly as possible.

To reduce latency, reduce data communication and storage costs, and increase network availability, IIoT applications are moving AI and ML capabilities to the edge of the network to enable more powerful preprocessing capabilities directly in the field. More specifically, advances in edge computing processing power have enabled IIoT applications to take advantage of AI decision-making capabilities in remote locations.

By connecting field devices to edge computers equipped with powerful local processors and AI, all data no longer needs to be sent to the cloud for analysis. In fact, the share of data created and processed at far-edge and near-edge sites is expected to increase from 10% to 75% by 2025, and the overall edge AI hardware market is expected to see a compound annual growth rate (CAGR) of 20.64% to 2024.
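One way to picture this reduction in traffic is an edge node that evaluates readings locally and forwards only a compact summary plus any values that need attention. The sketch below is a hypothetical illustration: the `summarize_at_edge` function, the temperature readings, and the alert threshold are all assumptions, and a simple threshold check stands in for a trained model.

```python
from statistics import mean


def summarize_at_edge(readings, alert_threshold):
    """Reduce a batch of raw readings to a small payload for the cloud.

    A fixed threshold stands in for the trained model's inference step.
    """
    alerts = [r for r in readings if r > alert_threshold]
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        "alerts": alerts,  # only out-of-range values leave the site
    }


# Hypothetical batch of temperature samples (degrees C)
batch = [71.2, 70.8, 71.5, 95.3, 71.0, 70.9]
payload = summarize_at_edge(batch, alert_threshold=90.0)
print(payload)
```

Instead of six raw samples, the cloud receives one small record, and the batch could just as easily hold thousands of readings.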

Choosing the right edge computer for industrial AIoT

When it comes to bringing AI to industrial IoT applications, there are several key issues to consider. Even though most of the work involved with training AI models still takes place in the cloud, companies will eventually need to deploy trained inferencing models in the field. AIoT edge computing essentially enables AI inferencing in the field rather than sending raw data to the cloud for processing and analysis. To effectively run AI models and algorithms, industrial AIoT applications require a reliable hardware platform at the edge. To choose the right hardware platform for an AIoT application, consider the following factors.

- Processing requirements for different phases of AI implementation

- Edge computing levels

- Development tools

- Environmental concerns

Processing requirements for different phases of AI implementation

Generally speaking, processing requirements for AIoT computing are concerned with how much computing power an application needs and whether a central processing unit (CPU) or an accelerator is required. Since each of the following three phases in building an AI edge computing application uses different algorithms to perform different tasks, each phase has its own set of processing requirements.

Three phases in building AIoT applications

The three phases of building AIoT applications are data collection, training and inferencing.

- Data collection: The goal of this phase is to acquire large amounts of information to train the AI model. Raw, unprocessed data alone is not helpful because the information could contain duplications, errors, and outliers. Preprocessing collected data at the initial phase to identify patterns, outliers, and missing information also allows users to correct errors and biases. Depending on the complexity of the data collected, the computing platforms typically used in data collection are usually based on Arm Cortex or Intel Atom/Core processors. In general, input/output (I/O) and CPU specifications, rather than the graphics processing unit (GPU), are more important for performing data collection tasks.

- Training: AI models need to be trained on advanced neural networks and resource-hungry ML or DL algorithms. These demand more powerful processing capabilities, such as powerful GPUs, to support the parallel computing needed to analyze large amounts of collected and preprocessed training data. Training the AI model involves selecting an ML model and training it on collected and preprocessed data. During this process, there’s a need to evaluate and tune the parameters to ensure accuracy. Many training models and tools are available to choose from, including off-the-shelf DL design frameworks, such as PyTorch, TensorFlow, and Caffe. Training is usually performed on designated AI training machines or cloud computing services, such as Amazon’s AWS Deep Learning AMIs, Amazon SageMaker Autopilot, Google Cloud AI, or Microsoft Azure Machine Learning, instead of in the field.

- Inferencing: The final phase involves deploying the trained AI model on the edge computer so it can make inferences and predictions based on newly collected and preprocessed data quickly and efficiently. Since the inferencing stage generally consumes fewer computing resources than training, a CPU or lightweight accelerator may be sufficient for an AIoT application. Nonetheless, a toolkit such as Intel OpenVINO or NVIDIA CUDA is needed to convert the trained model to run on specialized edge processors/accelerators. Inferencing also includes several different edge computing levels and requirements.

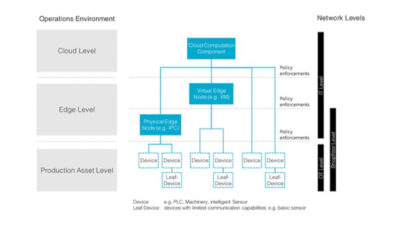
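The preprocessing described for the data collection phase can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the `preprocess` function, the sample records, and the median-based outlier rule are all assumptions chosen for brevity, and real projects often use more robust statistics.

```python
from statistics import median


def preprocess(samples, max_deviation):
    """Drop missing values, exact duplicate records, and outliers.

    samples: list of (timestamp, value) tuples; value may be None.
    max_deviation: largest allowed distance from the median value.
    """
    # 1. Remove records with missing values.
    present = [s for s in samples if s[1] is not None]

    # 2. Remove exact duplicates while preserving order.
    unique = list(dict.fromkeys(present))
    if not unique:
        return []

    # 3. Remove outliers relative to the median (illustrative rule).
    mid = median(v for _, v in unique)
    return [(t, v) for t, v in unique if abs(v - mid) <= max_deviation]


# Hypothetical raw sensor records: one missing, one duplicated, one outlier.
raw = [(1, 20.1), (2, None), (3, 20.3), (3, 20.3), (4, 250.0), (5, 19.9)]
clean = preprocess(raw, max_deviation=5.0)
print(clean)
```

Only the three plausible readings survive, which is the kind of cleaned input the training phase expects.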

Edge computing levels, architectures

Although AI training is still performed in the cloud or on local servers, data collection and inferencing necessarily take place at the edge of the network. Moreover, since inferencing is where the trained AI model does most of the work to accomplish the application objectives (such as making decisions or performing actions based on newly collected field data), there is a need to determine which of the following levels of edge computing is required in order to choose the appropriate processor.

Low edge computing level: Transferring data between the edge and the cloud is expensive and time-consuming and results in latency. With low edge computing, only a small amount of useful data is sent to the cloud, which reduces lag time, bandwidth, data transmission fees, power consumption, and hardware costs. An Arm-based platform without accelerators can be used on IIoT devices to collect and analyze data to make quick inferences or decisions.

Medium edge computing level: This level of inference can handle various IP camera streams for computer vision or video analytics with sufficient processing frame rates. Medium edge computing includes a wide range of data complexity based on the AI model and performance requirements of the use case, such as facial recognition applications for an office entry system versus a large-scale public surveillance network. Most industrial edge computing applications also need to factor in a limited power budget or fanless design for heat dissipation. It may be possible to use a high-performance CPU, entry-level GPU, or vision processing unit (VPU) at this level. For instance, the Intel Core i7 Series CPUs offer an efficient computer vision solution with the OpenVINO toolkit and software-based AI/ML accelerators that can perform inference at the edge.

High edge computing level: High edge computing involves processing heavier loads of data for AI expert systems that use more complex pattern recognition, such as behavior analysis for automated video surveillance in public security systems to detect security incidents or potentially threatening events. High edge computing level inferencing generally uses accelerators, including a high-end GPU, VPU, Google Tensor Processing Unit (TPU), or field programmable gate array (FPGA), which consume more power (200 W or more) and generate excess heat. Since the necessary power consumption and heat generated may exceed the limits at the far edge of the network, such as aboard a moving train, high edge computing systems are often deployed in near-edge sites, such as in a railway station, to perform tasks.
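The three levels can be summarized as a small lookup that pairs each level with the processor classes named above and flags when a high-level workload is likely to need a near-edge site. This is only a sketch: the `plan_deployment` function and its inputs are hypothetical, and the single power check reuses the 200 W figure quoted above rather than any vendor specification.

```python
# Processor classes per edge computing level, as described in the text.
CANDIDATES = {
    "low": ["Arm-based platform without accelerators"],
    "medium": ["high-performance CPU", "entry-level GPU", "VPU"],
    "high": ["high-end GPU", "VPU", "TPU", "FPGA"],
}

HIGH_LEVEL_POWER_W = 200  # figure quoted in the text for high-level accelerators


def plan_deployment(level, site_power_budget_w):
    """Return candidate processors and a suggested site for an inference workload."""
    if level not in CANDIDATES:
        raise ValueError(f"unknown edge computing level: {level!r}")
    # Assumption: only the high level is checked against the power budget,
    # because the text gives a wattage figure for that level alone.
    if level == "high" and site_power_budget_w < HIGH_LEVEL_POWER_W:
        site = "near edge"
    else:
        site = "far edge"
    return {"processors": CANDIDATES[level], "site": site}


print(plan_deployment("high", site_power_budget_w=60))
print(plan_deployment("low", site_power_budget_w=15))
```

A real selection would also weigh frame rates, model complexity, and thermal limits, which this lookup deliberately leaves out.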

Development tools for AI, ML applications

Several tools are available for various hardware platforms to help speed up the application development process or improve overall performance for AI algorithms and ML.

Deep-learning frameworks

Consider using a DL framework, which is an interface, library, or tool that allows users to build deep-learning models more easily and quickly, without getting into the details of the underlying algorithms. Deep-learning frameworks provide a clear and concise way for defining models using a collection of pre-built and optimized components. The three most popular include:

- PyTorch: Primarily developed by Facebook’s AI Research Lab, PyTorch is an open-source ML library based on the Torch library. It is used for applications such as computer vision and natural language processing, and is a free and open-source software released under the Modified BSD license.

- TensorFlow: Enable fast prototyping, research, and production with TensorFlow’s user-friendly Keras-based APIs, which are used to define and train neural networks.

- Caffe: This framework provides an expressive architecture that allows users to define and configure models and optimizations without hard-coding. Set a single flag to train the model on a GPU machine, and then deploy to commodity clusters or mobile devices.

Hardware-based accelerator toolkits

AI accelerator toolkits are available from hardware vendors and are specially designed to accelerate AI applications, such as ML and computer vision, on their platforms.

- Intel OpenVINO: The Open Visual Inference and Neural Network Optimization (OpenVINO) toolkit from Intel is designed to help developers build robust computer vision applications on Intel platforms. OpenVINO also enables faster inference for DL models.

- NVIDIA CUDA: The CUDA Toolkit enables high-performance parallel computing for GPU-accelerated applications on embedded systems, data centers, cloud platforms, and supercomputers built on the Compute Unified Device Architecture (CUDA) from NVIDIA.

AI, ML application location, environmental considerations

Last but not least, consider the physical location where the application will be implemented. Industrial applications deployed outdoors or in harsh environments (such as smart city, oil and gas, mining, power, or outdoor patrol robot applications) should have a wide operating temperature range and appropriate heat dissipation mechanisms to ensure reliability in blistering hot or freezing cold weather conditions. Certain applications also require industry-specific certifications, approvals, or design features, such as fanless design, explosion-proof construction, and vibration resistance. Because many real-world applications are deployed in space-limited cabinets and subject to size limitations, small form factor edge computers are preferred.

Highly distributed industrial applications in remote sites may also require communications over a reliable cellular or Wi-Fi connection. For instance, an industrial edge computer with integrated cellular LTE connectivity eliminates the need for an additional cellular gateway and saves valuable cabinet space and deployment costs. Redundant wireless connectivity with dual SIM support may also be needed to ensure data can be transferred if one cellular network signal is weak or goes down.
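The dual SIM redundancy described here amounts to a simple failover rule: use the primary link while it is healthy, and otherwise fall back to the secondary. The sketch below illustrates that logic only. The `choose_link` function, the status fields, and the -110 dBm threshold are hypothetical, and on real hardware the modem or its connection manager would typically handle this.

```python
def choose_link(links, min_signal_dbm):
    """Pick the first cellular link that is up and has usable signal.

    links: ordered list of dicts, primary SIM first.
    Returns the chosen link name, or None to signal that data should be buffered.
    """
    for link in links:
        if link["up"] and link["signal_dbm"] >= min_signal_dbm:
            return link["name"]
    return None


# Hypothetical status snapshot: the primary SIM is connected but its signal is weak.
status = [
    {"name": "sim1", "up": True, "signal_dbm": -118},
    {"name": "sim2", "up": True, "signal_dbm": -95},
]
print(choose_link(status, min_signal_dbm=-110))  # sim2
```

Returning `None` when neither link qualifies lets the caller buffer data locally until a network comes back.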

AI improves operational efficiencies, reduces costs

Enabling AI capabilities at the edge allows companies to improve operational efficiency and reduce risks and costs for industrial applications. Choosing the right computing platform for an industrial AIoT application should also address the specific processing requirements at the three phases of implementation: (1) data collection, (2) training and (3) inference. For the inference phase, also determine the edge computing level (low, medium or high) to select the most suitable type of processor.

Choose the best-suited edge computer to perform industrial AI inferencing tasks in the field by carefully evaluating the specific requirements of an AIoT application at each phase.

Ethan Chen and Alicia Wang are product managers at Moxa. Edited by Mark T. Hoske, content manager, Control Engineering, CFE Media and Technology.

KEYWORDS: AI, ML, industrial edge computing, IIoT

CONSIDER THIS

Consider AI-supported edge computing to support smarter real-time decisions.