The ISA Leadership conference keynote speaker covers reactions by executives and legislators to generative AI and how this could impact cybersecurity professionals.

AI, cybersecurity insights

- Artificial intelligence (AI) carries both risks and rewards for industrial applications, including cybersecurity, according to a keynote speaker at the 2023 International Society of Automation Leadership Conference.

- Cybersecurity rules changed with the new Securities and Exchange Commission (SEC) rule, which requires reporting within four days of a cybersecurity incident.

While risk protection and industrial process optimization are among the benefits of artificial intelligence (AI), hackers also are using AI in cybersecurity attacks. These were among the topics discussed at the International Society of Automation Leadership Conference, held in Colorado Springs, Oct. 3-7. The conference offered digital transformation, cybersecurity and career skills tracks to its attendees.

A keynote speaker, Mark Weatherford, spoke about how local and federal policies on cybersecurity and AI may impact security professionals and how they can prepare themselves for industry changes.

Weatherford has over 40 years’ experience working in the public and private sectors on information security. He began as a cryptologic officer for the U.S. Navy and has most recently worked as the chief strategic officer for the National Cybersecurity Center.

What are the benefits, risks of AI for industrial use?

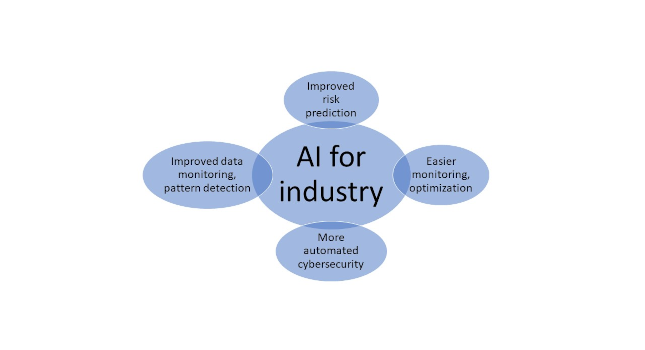

Throughout the talk, Weatherford discussed the value and risk associated with generative artificial intelligence (AI) for industrial cybersecurity. He cited better risk prediction, easier monitoring and optimization of industrial processes, increased automation of security tasks and the ability to analyze large volumes of data to detect patterns and prevent attacks as primary AI advantages.

As for the negatives, generative AI can help hackers and cyber criminals just as easily as it can help companies and organizations. With obvious examples like deepfakes and synthetic media, generative AI creates a real risk of more sophisticated adversarial attacks.

As labor shortages continue in many positions in many industries, AI’s ability to work faster and more accurately than people for some applications may mean that certain jobs will be outsourced to AI.

“Businesses aren’t benevolent so if they can replace you with something cheaper and faster, they will,” Weatherford said. “If you’re sitting back and watching it happen passively, you’ll be left by the wayside.”

Guiding AI to be helpful for industrial applications

However, this doesn’t mean that everyone’s career is over. Instead, workers will need to become experts in using AI by asking the right questions, generating useful reports out of the mountains of data and helping companies apply AI effectively and safely.

As companies and individuals become more aware of the power and danger of AI, it has become a big talking point in the government as well. However, many legislators are uneducated on cybersecurity and technology as a whole.

Cybersecurity breach: Four-day reporting requirement from SEC

Throughout his career in the public and private sectors, Weatherford has met with federal and local officials to educate them and begin to unpack the question of what the government’s role is in cybersecurity. Officials have already begun to create new laws surrounding cyber-attacks, with the most notable being a recent Securities and Exchange Commission rule that mandates reporting of a cyber incident within four days of occurrence.

This rule is controversial within the cybersecurity industry because, while it represents government care, it also creates what Weatherford called time-consuming red tape for companies of all sizes. Weatherford cited a recent Clorox incident, which occurred Aug. 14, as an example of this. The initial report was issued that day, but updates were released at least every two to three days until Sept. 18. The frequent reporting took time and resources away from resolving the most immediate details connected to the issue.

Much like the career issues that individuals may face, the policy issues can be addressed by more education and discussion between officials and experts. Weatherford urged the audience to speak up and connect with their companies’ C-suite and their government officials.

“The importance of employees in the cybersecurity equation is critical,” Weatherford said. “A culture of security awareness means engaged and informed employees.”

While it may seem that AI is going to replace people at all levels of a job, Weatherford argues that instead, it will create a restructuring of the workplace and of cybersecurity. To create safeguards and prevent attacks, cybersecurity professionals are responsible for understanding how to use and protect against new technologies. They also should make themselves available to officials and executives to ensure that public and private policies are as strong and helpful as possible, he suggested.

See Control Engineering cybersecurity topical page:

www.controleng.com/system-integration/cybersecurity

See more from the CFE Media and Technology publication, Industrial Cybersecurity Pulse: