Distillation columns and precise composition control: Composition control is critical for economical operation of distillation columns. Model-based control techniques prevent purity problems associated with dead and lag times.

Distillation columns are among the most common unit operations for separation and purification in the process industries, and the purity of the product streams is critical for economical operation of the column. For precise composition control, on-stream process analyzers often are employed to measure the composition of the critical product.

Modern control techniques have been developed to use analyzer measurements from gas chromatographs (GCs) for closed-loop control of distillation column product composition.

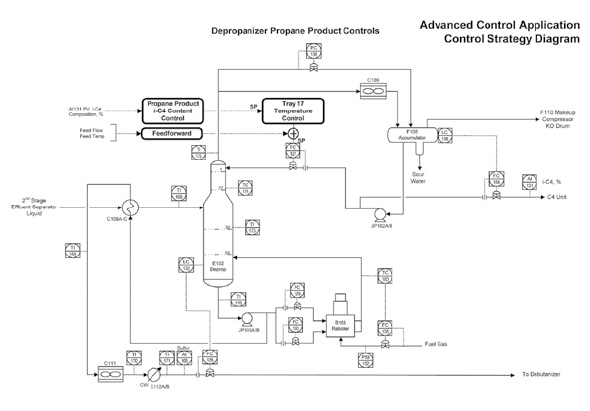

For example, consider the depropanizer shown in the accompanying figure. We’ll assume that the key product quality variable is the iso-butane (i-C4) content of the propane (overhead) product, which is being measured by an on-line GC. Maintaining i-C4 content just below its maximum specification limit maximizes propane recovery. The reflux flow is adjusted in cascade from a tray temperature controller, indirectly maintaining propane product composition. The operator then adjusts the tray temperature setpoint based upon feedback from the analyzer.

In practice, the tray temperature control may not work properly. The most common reason is the dead time and lag between a change in reflux flow and its effect on the tray temperature. However, with advanced regulatory control (ARC) techniques, such as feedforward, this cascade can be made to work.

The next step is to implement a process identification model (PIM) and close the loop between the analyzer and the tray temperature controller. Because the sample could have been injected into the analyzer up to 40 minutes earlier, the tray temperature must be shifted in time so that it lines up with the analyzer reading. In the case of this depropanizer, passing the temperature through a simple dead time and lag transfer function delayed it adequately to line up with the composition. Analysis of trend data indicated that a dead time of 34.5 minutes and a lag time of 14 minutes provided a suitable match between the two variables.
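As a rough illustration of the time alignment described above, the sketch below passes sampled tray temperatures through a pure dead time followed by a first-order lag. The class name `DeadTimeLag`, the 0.5-minute sample interval and the temperature values are assumptions made for the example; only the 34.5-minute dead time and 14-minute lag come from the depropanizer case.

```python
import math
from collections import deque


class DeadTimeLag:
    """Pure dead time followed by a first-order lag, at a fixed sample interval.

    Hypothetical helper for illustration; not taken from any control system library.
    """

    def __init__(self, dead_time_min, lag_min, sample_min, initial):
        # Approximate the dead time by a whole number of samples (assumption).
        self.n_delay = round(dead_time_min / sample_min)
        # Buffer holds the last n_delay + 1 inputs; the oldest one is the delayed value.
        self.buffer = deque([initial] * (self.n_delay + 1), maxlen=self.n_delay + 1)
        # Discrete first-order lag coefficient for an input held between samples.
        self.alpha = 1.0 - math.exp(-sample_min / lag_min)
        self.output = initial

    def update(self, value):
        """Feed one new temperature sample and return the delayed, lagged value."""
        self.buffer.append(value)
        delayed = self.buffer[0]
        self.output += self.alpha * (delayed - self.output)
        return self.output


# Example: tray temperature steps from 100 to 105 (arbitrary units),
# sampled every 0.5 minutes (assumed sample interval).
aligner = DeadTimeLag(dead_time_min=34.5, lag_min=14.0, sample_min=0.5, initial=100.0)
outputs = [aligner.update(105.0) for _ in range(400)]
```

In this sketch the output stays at the initial value until the dead time has elapsed, then approaches the new temperature with a 14-minute time constant. In practice the two parameters would be tuned against trend data, as the article describes.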

We can relate the delayed tray temperature to the product composition with a simple, generally linear model:

(Temperature)delayed = K * (Composition) + Bias

Each time a new analyzer reading comes in and is validated, the controller uses the equation in two steps. First, it predicts the product composition from the delayed actual temperature and compares the prediction with the analyzer result. Based on the error between the two values, rules are used to determine a new equation bias. Second, the equation is inverted to calculate the new temperature setpoint, which is downloaded to the temperature controller. Note that control action is taken only when a new reading comes in from the GC.
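The two-step update can be sketched as follows. The article does not spell out the rules used to determine the new bias, so this example assumes a simple one: move the bias a fixed fraction of the way toward the value that would make the model match the analyzer, with a clamp on the step size. The names `CompositionModel`, `update_fraction` and `max_step`, and all numeric values, are hypothetical. Validation of the analyzer reading is assumed to happen before this code is called.

```python
from dataclasses import dataclass


@dataclass
class CompositionModel:
    """Linear model: delayed_temperature = gain * composition + bias."""

    gain: float  # K in the article's equation
    bias: float

    def predict_composition(self, delayed_temp):
        # Step 1a: solve the model for composition at the delayed temperature.
        return (delayed_temp - self.bias) / self.gain

    def update_bias(self, delayed_temp, analyzer_value,
                    update_fraction=0.5, max_step=1.0):
        # Step 1b: compare the prediction with the analyzer and adjust the bias.
        # Assumed rule: take a fraction of the correction, clamped to max_step.
        error = analyzer_value - self.predict_composition(delayed_temp)
        step = -update_fraction * self.gain * error
        step = max(-max_step, min(max_step, step))
        self.bias += step
        return error

    def temperature_setpoint(self, composition_target):
        # Step 2: use the updated model to get the temperature setpoint.
        return self.gain * composition_target + self.bias


def on_new_analyzer_reading(model, delayed_temp, analyzer_value, composition_target):
    """Run once per validated GC result; returns the new temperature setpoint."""
    model.update_bias(delayed_temp, analyzer_value)
    return model.temperature_setpoint(composition_target)


# Example with made-up numbers: the analyzer reads more i-C4 than predicted,
# so the temperature setpoint is lowered.
model = CompositionModel(gain=4.0, bias=90.0)
new_setpoint = on_new_analyzer_reading(
    model, delayed_temp=98.0, analyzer_value=2.1, composition_target=2.0
)
```

Taking only a fraction of the correction on each reading is one way to keep a single noisy GC result from moving the setpoint too far; a real installation would choose its own rules and limits.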

This technique works well when there is an intermediate control variable, such as a tray temperature (inferential of composition), that can be correlated in time with the analyzer reading. Since feedback from the analyzer is model-based, there are no inherent shortcomings associated with the long dead time and lag, unlike conventional proportional-integral-derivative (PID) feedback control.

Jim Ford is process control consultant at Maverick Technologies.
