Not long ago, building a digital control system for a real-time application was relatively simple. You started with whatever real-time operating system (RTOS) you were most enamored with, selected a microcontroller that was 1) supported by that RTOS, and 2) had price, performance, I/O features, and memory that met your application needs. Then, you wrote application software that would take advantage of your RTOS’ features to best guarantee that your controller would do what it needed to do when it needed to do it.

It’s not that simple anymore. In some ways, multicore microcontroller technology and software virtualization make the embedded-system and motion-control design engineer’s job more complex. In many others, however, they make the job easier. Resolving this paradox requires a basic understanding of RTOS, multicore, and virtualization technologies. Let’s start with basic RTOS technology.

Real-time operating systems live and die by handling interrupts.

On time, every time

Wikipedia says that:

“A real-time operating system (RTOS; generally pronounced as “are-toss”) is a multitasking operating system intended for real-time applications.… An RTOS facilitates the creation of a real-time system, but does not guarantee the final result will be real-time; this requires correct development of the software. … An RTOS is valued more for how quickly and/or predictably it can respond to a particular event than for the given amount of work it can perform over time. Key factors in an RTOS are therefore a minimal interrupt latency and a minimal thread switching latency.”

An RTOS makes it possible to program mission-critical tasks in a multitasking environment. In non-multitasking situations, without multiple tasks vying for computing-system resources, everything is done in real time. That is, when an event happens that demands CPU attention, it gets CPU attention right away. Since the CPU has nothing else to do, nothing gets in the way.

In a multitasking environment, where the CPU must multiplex among a number of tasks, real time becomes a genuine constraint. When an event occurs that demands CPU attention, the CPU may already be busy doing something else, so it cannot react instantaneously. First, it has to set aside what it is already doing in a way that lets it return later. Then, it has to react to the event. Finally, it has to pick up the thread of what it was doing before.

An industrial RTOS lives and dies by servicing the interrupts that mark events. An interrupt is a signal from an external agent that asserts one of the CPU’s interrupt lines; for a level-triggered interrupt, the line stays asserted until the CPU acknowledges the interrupt. The CPU must respond to the interrupt within a specified time, called its deadline.
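The set-aside, respond, and resume sequence described above can be sketched as a simple timing budget. This is a minimal model, assuming a single CPU with no nested interrupts, cache effects, or bus contention; the class `CpuTiming`, its fields, and all the microsecond figures are hypothetical, chosen only to show how interrupt latency, the service routine, and the deadline relate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuTiming:
    """Hypothetical per-CPU timing costs, all in microseconds."""
    max_masked_us: float       # longest stretch the RTOS runs with interrupts disabled
    context_save_us: float     # time to set the interrupted task aside
    context_restore_us: float  # time to pick the interrupted task back up


def worst_case_response_us(cpu: CpuTiming, isr_us: float) -> float:
    """Worst-case time from interrupt assertion to the end of the service routine.

    Interrupt latency (masked window plus context save) plus the ISR itself.
    """
    return cpu.max_masked_us + cpu.context_save_us + isr_us


def meets_deadline(cpu: CpuTiming, isr_us: float, deadline_us: float) -> bool:
    """True if even the worst-case response finishes by the deadline."""
    return worst_case_response_us(cpu, isr_us) <= deadline_us


def interrupted_task_delay_us(cpu: CpuTiming, isr_us: float) -> float:
    """Extra time the preempted task loses: save, service, restore."""
    return cpu.context_save_us + isr_us + cpu.context_restore_us


if __name__ == "__main__":
    cpu = CpuTiming(max_masked_us=10.0, context_save_us=2.0, context_restore_us=2.0)
    isr = 25.0
    print("worst-case response:", worst_case_response_us(cpu, isr), "us")
    print("meets 50 us deadline:", meets_deadline(cpu, isr, 50.0))
    print("interrupted task delayed by:", interrupted_task_delay_us(cpu, isr), "us")
```

In this model the worst case assumes the interrupt arrives just as the RTOS enters its longest interrupts-disabled section, which is why keeping such sections short matters as much as a fast context switch.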

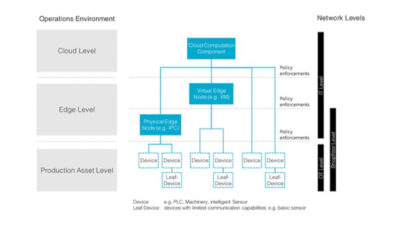

A hypervisor sits between the hardware and one or more instances of operating systems.

Virtual world

Operating systems (OSs) are software. Software is all about bit patterns and manipulating them. To software, hardware is just a bunch of bit patterns defining locations in address space and data words temporarily associated with those locations.

Virtualization relies on the fact that software neither knows nor cares where those patterns come from or where they go. It just deals with the patterns as they appear.
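A toy model can show what it means in practice that software does not know where its bit patterns go. In this sketch, which is an illustration and not how any particular hypervisor works, each guest sees only numeric addresses, and the hypervisor quietly redirects writes to one hypothetical UART transmit register (`UART_TX`) to whatever backing store it chooses. Real hypervisors do this with hardware support such as memory-management traps; the class and function names here are made up.

```python
from typing import Callable, Dict, List

UART_TX = 0x1000_0000  # hypothetical transmit-register address in guest space


class GuestAddressSpace:
    """What one guest OS sees: plain reads and writes to numeric addresses."""

    def __init__(self) -> None:
        self._ram: Dict[int, int] = {}
        self._write_traps: Dict[int, Callable[[int], None]] = {}

    def trap_writes(self, addr: int, handler: Callable[[int], None]) -> None:
        """Hypervisor side: route guest writes at addr to handler."""
        self._write_traps[addr] = handler

    def write(self, addr: int, value: int) -> None:
        handler = self._write_traps.get(addr)
        if handler is not None:
            handler(value)           # the guest never learns this happened
        else:
            self._ram[addr] = value  # ordinary memory

    def read(self, addr: int) -> int:
        return self._ram.get(addr, 0)


def guest_driver_send(space: GuestAddressSpace, text: str) -> None:
    """Unmodified 'driver' code: writes each byte to the UART register."""
    for byte in text.encode("ascii"):
        space.write(UART_TX, byte)


if __name__ == "__main__":
    # Two guests run identical driver code; the hypervisor sends each
    # guest's bytes to a different backing buffer.
    console_a: List[int] = []
    console_b: List[int] = []
    guest_a, guest_b = GuestAddressSpace(), GuestAddressSpace()
    guest_a.trap_writes(UART_TX, console_a.append)
    guest_b.trap_writes(UART_TX, console_b.append)
    guest_driver_send(guest_a, "HMI up")
    guest_driver_send(guest_b, "loop ok")
    print(bytes(console_a).decode(), "|", bytes(console_b).decode())
```

The driver code is identical for both guests; only the hypervisor’s routing table differs, which is the property virtualization exploits.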

Suppose you have one very fast computer, but what you really need is two somewhat slower computers. Maybe you want to run Microsoft Windows Vista on one and Wind River’s VxWorks RTOS on the other. Vista might provide the human-machine interface (HMI) and automatically archive data over the Internet to a server in, say, Pakistan, while VxWorks controls your process in real time.

You can use software virtualization to “clone” the fast processor you have, and turn it into two identical virtual processors that don’t run quite as fast. Run Vista on one virtual processor, and VxWorks on the other.

Virtualization software that makes this possible is called a “hypervisor.” A hypervisor sits between the hardware and multiple OS instances. The hardware, looking at the hypervisor, thinks it is seeing an ordinary OS. Each OS, looking at the hypervisor from its own viewpoint, thinks it is seeing hardware.

The hypervisor has three jobs:

First, it gives multiple OSs access to the hardware, including processor, memory, and I/O, and makes sure otherwise incompatible OSs play nicely together. Hypervisors intended for real-time work partition the available hardware resources so as to maintain the RTOS’ determinism.

Second, it isolates the OSs from one another, including their data and system security features. A hacker who gets into the server in Pakistan may be able to reach over the Internet and see your HMI running on Vista, but cannot get to your control system running on the RTOS, even though both run on the same processor.

Third, it provides secure data sharing between OSs.
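One common way a hypervisor can protect an RTOS’ determinism is static time partitioning: processor time is divided into a repeating major frame of fixed windows, each owned by one OS instance. The sketch below assumes that scheme (other hypervisors instead dedicate whole cores or use priority-based scheduling); `Window`, the partition names, and the window lengths are hypothetical. It computes each partition’s share of the CPU, in effect the speed of its “virtual processor,” and the longest time a partition can be kept off the CPU, which bounds its added latency no matter what the other OS is doing.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Window:
    partition: str  # OS instance that owns the CPU during this window
    length_us: int  # window length in microseconds


def cpu_share(frame: List[Window], partition: str) -> float:
    """Fraction of each major frame given to one partition."""
    total = sum(w.length_us for w in frame)
    owned = sum(w.length_us for w in frame if w.partition == partition)
    return owned / total


def max_wait_us(frame: List[Window], partition: str) -> int:
    """Longest stretch, over repeating frames, during which `partition` has no CPU."""
    spans: List[Tuple[int, int]] = []  # (start, end) of each owned window
    t = 0
    for w in frame:
        if w.partition == partition:
            spans.append((t, t + w.length_us))
        t += w.length_us
    if not spans:
        raise ValueError(f"{partition} never runs")
    frame_len = t
    gaps = []
    for i, (_, end) in enumerate(spans):
        if i + 1 < len(spans):
            next_start = spans[i + 1][0]
        else:
            next_start = spans[0][0] + frame_len  # wrap into the next frame
        gaps.append(next_start - end)
    return max(gaps)


if __name__ == "__main__":
    # Hypothetical 1 ms major frame: the RTOS and a desktop OS alternate.
    frame = [Window("rtos", 200), Window("gpos", 300),
             Window("rtos", 200), Window("gpos", 300)]
    for name in ("rtos", "gpos"):
        print(f"{name}: {cpu_share(frame, name):.0%} of CPU, "
              f"max wait {max_wait_us(frame, name)} us")
```

With this example frame, the RTOS gets 40 percent of the processor and never waits more than 300 µs for its next window. Shorter, more frequent RTOS windows lower that bound at the cost of more switching overhead.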

Note the term “instances”; it is critical to understanding virtualization. One may have multiple instances of the same OS. For example, you can establish multiple RTOS instances, each supporting a separate control loop. You could also have two desktop (non-real-time) OSs, one to connect to the Internet and the other for running office applications in a secure environment. Should hackers penetrate the Internet-connected instance, only data files associated directly with that instance may be compromised.

The hypervisor provides a firewall to protect shared files. One can clean up malware infecting an OS instance simply by deleting the affected instance; data shared with the second instance is unaffected. To reconnect to the Internet, simply start a new Internet-connected OS instance.
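The isolation and clean-up ideas above can be modeled as bookkeeping over memory regions. This is a conceptual sketch only: `Region`, `PartitionMap`, the instance names, and the addresses are hypothetical, and a real hypervisor enforces such rules with memory-management hardware rather than Python checks (it also does not model the firewall filtering itself). Each instance gets a private region no other instance may touch, all instances can reach one shared region, and deleting an instance leaves the shared region and the other instances alone.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Region:
    base: int
    size: int

    def contains(self, addr: int) -> bool:
        return self.base <= addr < self.base + self.size

    def overlaps(self, other: "Region") -> bool:
        return self.base < other.base + other.size and other.base < self.base + self.size


class PartitionMap:
    """Hypothetical hypervisor bookkeeping: one private region per OS instance,
    plus one shared region that every live instance may access."""

    def __init__(self, shared: Region) -> None:
        self.shared = shared
        self.private: Dict[str, Region] = {}

    def start_instance(self, name: str, region: Region) -> None:
        # Refuse any layout in which two instances could touch the same memory.
        for other in [self.shared, *self.private.values()]:
            if region.overlaps(other):
                raise ValueError(f"{name} would overlap an existing region")
        self.private[name] = region

    def delete_instance(self, name: str) -> None:
        # Dropping an instance frees its private memory; shared data stays intact.
        del self.private[name]

    def may_access(self, name: str, addr: int) -> bool:
        region = self.private.get(name)
        if region is None:
            return False
        return region.contains(addr) or self.shared.contains(addr)


if __name__ == "__main__":
    pm = PartitionMap(shared=Region(0x3000_0000, 0x1_0000))
    pm.start_instance("rtos", Region(0x1000_0000, 0x100_0000))
    pm.start_instance("web", Region(0x2000_0000, 0x100_0000))
    print(pm.may_access("web", 0x1000_0040))   # False: RTOS memory is off limits
    pm.delete_instance("web")                  # "clean up" a compromised instance
    pm.start_instance("web", Region(0x2000_0000, 0x100_0000))
    print(pm.may_access("rtos", 0x3000_0010))  # True: shared data survives
```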

Finally, hypervisors make it possible to mix real-time and non-real-time OSs. In such a situation, the hypervisor works to maintain the RTOS’ determinism—even at the expense of the non-real-time OS’s performance. This is of obvious value to control engineers, whose systems often have multiple tasks running simultaneously with a range of determinism needs.

Multicore hardware

Multicore microcontroller technology is a fully integrated version of multiprocessing architecture. Multiprocessing hardware consists of multiple CPUs sharing memory space. Such technology has been around for decades in the form of multiple microprocessors interconnected at the board level to shared I/O and memory resources.

Symmetric multiprocessing systems have multiple identical CPU cores that share memory and other resources.

Over the past few years, semiconductor manufacturers have brought out increasingly sophisticated devices that pack multiple CPU cores onto a single die. This makes integration much tighter and data communication between cores much faster. The term for hardware exhibiting this kind of tight integration is “multicore.”

Multiprocessing architectures divide into two types: symmetric multiprocessing (SMP) and asymmetric multiprocessing (AMP). SMP uses a number of identical (i.e., symmetric) CPUs, while AMP uses CPUs that are not all the same.

Most commercially available multicore devices use SMP architecture. AMP architectures tend to be application specific, so it is difficult to achieve large production volumes that drive cost-per-device down.

Dual-core SMP devices, which have two identical CPU cores integrated into the same IC, are now readily available, if not common. Quad-core SMP devices, which have four identical cores, have also been available for several years. More recently, Freescale Semiconductor introduced an 8-core microprocessor device for communications applications. Other semiconductor producers have at least talked about products with many (in some cases very many) more cores.

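Perhaps the most obvious benefit of multicore integration is a very “green” effect. Integrating N CPU cores on one die raises overall processing speed by a factor of roughly 0.8N with no increase in clock rate. Because higher clock rates generally require higher supply voltages, and dynamic power grows with both, getting performance from more cores instead of a faster clock can substantially reduce power consumption.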

Ray Simar, advanced architecture development manager at Texas Instruments, described a three-core processor, with all three cores running at 1 GHz, that provided the computing power of a single processor running at 3 GHz. He then pointed out another IC incorporating six cores, each running at 500 MHz, that provided the same computing power at a fraction of the power consumption.
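The arithmetic behind these claims can be checked with a short calculation. The sketch assumes the article’s rough 0.8N throughput rule and the standard CMOS approximation that dynamic power per core is proportional to C·V²·f, with capacitance C normalized to 1; the supply voltages are hypothetical values chosen for illustration, not figures from Texas Instruments.

```python
def effective_speed_ghz(cores: int, clock_ghz: float, efficiency: float = 0.8) -> float:
    """Single-core-equivalent speed under the rough ~0.8*N scaling rule."""
    return efficiency * cores * clock_ghz


def relative_dynamic_power(cores: int, clock_ghz: float, volts: float) -> float:
    """CMOS dynamic power ~ C * V^2 * f per core, with C normalized to 1."""
    return cores * volts ** 2 * clock_ghz


if __name__ == "__main__":
    # Hypothetical supply voltages: slower cores can usually run at lower voltage.
    configs = {
        "3 cores @ 1.0 GHz, 1.2 V": (3, 1.0, 1.2),
        "6 cores @ 0.5 GHz, 1.2 V": (6, 0.5, 1.2),
        "6 cores @ 0.5 GHz, 0.9 V": (6, 0.5, 0.9),
    }
    for label, (n, f, v) in configs.items():
        print(f"{label}: ~{effective_speed_ghz(n, f):.2f} GHz-equivalent, "
              f"relative power {relative_dynamic_power(n, f, v):.2f}")
```

At the same voltage, six cores at 500 MHz draw as much dynamic power as three at 1 GHz; the saving comes from the lower supply voltage that slower cores can typically tolerate. Setting `efficiency=1.0` reproduces the ideal 3 GHz-equivalent figure quoted for the three-core part.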

Asymmetric multiprocessing systems include multiple CPU cores that are not all identical.

Multicore technology also makes it possible to divide computing tasks into several parts, a strategy that is especially useful in control systems. For example, one core of a multicore device might be dedicated to running a tight control loop in real time, freeing up other cores to multitask less time-critical jobs that might together amount to a significant computation load. Without the ability to segregate the control loop on a dedicated core, it would have to compete with other tasks for CPU attention.
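On a Linux host, the dedicated-core idea can be approximated by pinning the control process to one core. The sketch below uses Python’s `os.sched_setaffinity`, which exists only on some platforms (Linux among them), plus a hypothetical fixed-period loop that records how late each cycle starts. It is only an illustration: Python is not a real-time language, and pinning keeps this process on one core but does not by itself keep other processes off that core (on Linux that usually takes further kernel configuration, such as the `isolcpus` boot parameter).

```python
import os
import time
from typing import List


def pin_to_core(core: int) -> bool:
    """Restrict the calling process to a single CPU core.

    Returns False where CPU affinity is unavailable (e.g. macOS, Windows)
    or the request is refused, so the caller can fall back gracefully.
    """
    if not hasattr(os, "sched_setaffinity"):
        return False
    try:
        os.sched_setaffinity(0, {core})  # pid 0 means "the calling process"
    except OSError:
        return False
    return True


def run_control_loop(period_s: float, iterations: int) -> List[float]:
    """Run a fixed-period loop and return how late each cycle started, in seconds."""
    lateness: List[float] = []
    next_release = time.monotonic() + period_s
    for _ in range(iterations):
        now = time.monotonic()
        if now < next_release:
            time.sleep(next_release - now)
        lateness.append(max(0.0, time.monotonic() - next_release))
        # A real controller would read sensors, compute, and drive actuators here.
        next_release += period_s
    return lateness


if __name__ == "__main__":
    if hasattr(os, "sched_getaffinity"):
        # Use the highest-numbered core this process is allowed to run on.
        pin_to_core(max(os.sched_getaffinity(0)))
    late = run_control_loop(period_s=0.001, iterations=500)
    print(f"worst-case start lateness: {max(late) * 1e6:.0f} us")
```

Comparing the worst-case lateness with and without pinning, under a background load, gives a rough feel for why segregating the loop helps; an RTOS on a dedicated core provides guarantees this sketch cannot.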

Putting it all together

Events surrounding the June 2008 introduction of Freescale’s QorIQ P4080 8-core SMP device illustrate the many ways RTOS, virtualization, and multicore technologies unite to give control engineers new system-architecture options. The announcement prompted immediate—indeed, effectively simultaneous—follow-on announcements by a number of embedded-system software companies.

Green Hills Software demonstrated its Multi IDE debugging all 8 cores of the Freescale processor as modeled on Virtutech’s Simics system simulator. The demonstration also included the Green Hills Integrity RTOS with its Padded Cell hypervisor hosting applications and guest operating systems. Virtutech announced a hybrid simulation capability to support multiple levels of model abstraction within its Simics simulation environment.

Wind River Systems announced its own software development solution, providing pre-silicon support for both VxWorks and its Linux distribution on the multicore device, along with the company’s Eclipse-based Workbench development suite, running on Virtutech’s Simics simulator.

For control engineers, these trends mean they no longer have to tailor their applications to the constraints of available hardware and software. They are now free to create virtual systems with the performance and characteristics they want.

With freedom, however, comes responsibility. Having more choices means you now have to make more choices. It also requires control engineers to learn about the new techniques and technology in order to make good choices.

Author Information

C.G. Masi is a senior editor with Control Engineering. Contact him at [email protected]