Hybrid artificial intelligence (AI) acts as a companion technology to data-driven machine intelligence methods and can help edge computing evolve.

Learning Objectives

- Edge computing systems are designed with varying, and often limited, levels of capability and need to be close to the action to be effective.

- Hybrid AI acts as a companion technology to data-driven machine intelligence methods and can improve edge computing.

Artificial Intelligence Insights

- Artificial intelligence (AI) is used in many different industries to provide users with greater insight and information about day-to-day operations.

- Edge computing software integrates AI to deliver better insights, but it is limited in how much it can do.

- Hybrid AI acts as a companion technology to data-driven machine intelligence methods and can improve edge computing by overcoming limits in reasoning and sequential planning and by providing actionable feedback for the user.

Artificial intelligence (AI) is being developed and deployed across a range of industries. It has enabled many firms to capitalize on new opportunities, create business models and gain competitive advantages. In the industrial, energy, defense, healthcare and financial segments, AI is becoming a core differentiator in firms' ability to compete effectively in their respective markets.

Take the Internet of Things (IoT), for example. By 2025, forecasts suggest there will be more than 75 billion IoT connected devices in use, a nearly threefold increase from 2019. As this number continues to grow exponentially, connecting all these devices with intelligent capabilities will be one of the biggest challenges to reaching the full potential of the IoT.

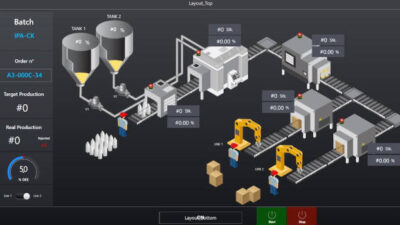

As the technology capabilities of devices and systems have matured, nearly all objects in the physical world can have connectivity and computing built in natively or retrofitted. User expectations have also grown. Users demand more from their experiences and touchpoints, and they expect the same user-friendly experience they get from their smartphones and laptops. The machine learning user experience, MLUX (ML + UX), needs to be carefully designed to deliver a positive, impactful, contextual and holistic user experience with minimal friction points to promote adoption.

Edge computing systems are evolving

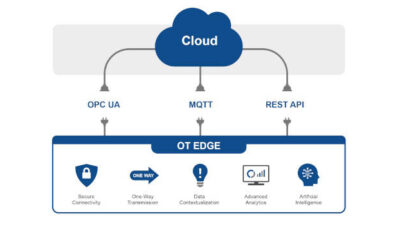

Edge systems are designed with varying, and often limited, compute budgets, storage and memory footprints, network connectivity and operating constraints. The edge paradigm moves processing close to where the data is sensed, generated and acted on. In select scenarios, its cloud-like capabilities can be extended further with edge gateways to offer low latency and high bandwidth utilization.

Across the spectrum, hardware families span resource-constrained, ultra-low-power bare-metal devices, RTOS microcontrollers (e.g., ARM Cortex-M) and system-on-chip (SoC) application microprocessors (e.g., ARM Cortex-A). The application profile targets, licensed by original design manufacturers and original equipment manufacturers (ODMs/OEMs), are often found in smartphones and tablets and/or as application processors connected to external microcontrollers, sensors and actuators, or baseband processors. Within this spectrum, specialized AI accelerator ASICs have been designed to handle high-performance AI operator instructions and support state-of-the-art (SOTA) models. Although silicon implementation will lag AI research, it comes with the benefit of improved performance, which indirectly links back to the end-user experience.

Before deployment, many of these SOTA AI models need to be further optimized and compressed without degrading target performance. As the field has grown, model deployment to embedded targets has shifted toward an embedded systems paradigm, where code is cross-compiled on a host computer for the target.
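As one illustration of what compressing a model can mean, the sketch below applies symmetric 8-bit quantization to a short list of weights. The article does not name a specific compression technique, so quantization is an assumption chosen for illustration. The function names and the sample weights are hypothetical, and real toolchains handle this per layer with calibration data.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, standing in for one small layer of a model.
weights = [0.82, -1.27, 0.05, 0.0, 0.64]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half a quantization step. Whether that error is acceptable depends on the model and has to be checked against the target performance.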

Instead of deploying smaller distilled models with fewer parameters, which trades away target performance, users can compile and optimize models for the target processor and device through an intermediate representation (MLIR) and a runtime execution environment. This also links back to delivering lower latency and a better user experience.

During operation, AI edge systems need some level of independence and must still make high-performing decisions despite their limitations until they can take advantage of more powerful resources and the fleet of data and knowledge from the connected cloud infrastructure. For connected devices, this state of independence is called emergency mode.
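The fallback behavior described above can be sketched as a small decision wrapper: try the cloud model first, and drop to a simpler on-device model when the connection fails. This is a minimal sketch under stated assumptions. The thresholds, action names and both stand-in models are hypothetical, and a real device would also need timeouts, retries and state synchronization once the connection returns.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    action: str
    source: str  # "cloud" or "local"


def local_model(temperature_c: float) -> str:
    """Hypothetical on-device rule: a single fixed threshold."""
    return "throttle" if temperature_c > 80.0 else "continue"


def reachable_cloud(temperature_c: float) -> str:
    """Stand-in for a richer cloud model with finer-grained actions."""
    if temperature_c > 90.0:
        return "shut_down"
    return "throttle" if temperature_c > 75.0 else "continue"


def unreachable_cloud(temperature_c: float) -> str:
    """Simulates a lost connection to the cloud service."""
    raise ConnectionError("cloud endpoint unreachable")


def decide(temperature_c: float,
           cloud_model: Callable[[float], str],
           fallback_model: Callable[[float], str]) -> Decision:
    """Prefer the cloud model; fall back to the local one (emergency mode)."""
    try:
        return Decision(cloud_model(temperature_c), "cloud")
    except ConnectionError:
        return Decision(fallback_model(temperature_c), "local")


online = decide(78.0, reachable_cloud, local_model)
offline = decide(78.0, unreachable_cloud, local_model)
print(online)
print(offline)
```

For the same reading, the two paths can disagree: the cloud model throttles at 78 °C while the coarser local rule continues. Recording the `source` field lets operators see which decisions were made in emergency mode.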

Improving the edge with hybrid AI

Using hybrid AI is a potential pathway to advance edge AI beyond the status quo. Hybrid AI acts as a companion technology to data-driven machine intelligence methods, supplementing them with cognitive symbolic AI capabilities and overcoming limits in reasoning, sequential planning, actionable feedback, and human-understandable explanation and interpretation. A core focus is on collaborative interaction with the user, with the system acting as a contextual decision support advisor and diagnosis system, particularly in situations associated with high risk, uncertainty and unknowns.

Example: Within NASA JPL, hybrid AI was developed for Mars Rover landings, adding reasoning intelligence to improve navigation in unfamiliar situations and difficult terrain where traditional techniques have difficulty.

By using hybrid AI, systems can be enriched with guidance from encoded human expert knowledge and constraints, industry guidelines, and best practices, along with the traditional supporting historical and streaming event information. These cognitive engines and algorithms model hypothetical paths and scenarios to propose courses of action and compose intelligent near real-time decisions, even in edge conditions that are less than ideal in terms of operation, acquisition, and access to quality data.

Examples: Consider edge devices with limited or spotty connectivity that operate in harsh or remote environments, see long-tail data distributions and are limited in data acquisition and storage. Or consider the unhappy path, when devices go through periods of malfunction, receive conflicting data over time from sensor fusion or must navigate higher-risk error-condition paths. These are select examples where traditional Big Data and fine-tuning methods don't quite fit. Hybrid AI can address these conditions directly as part of the recommended action plan and the user feedback loop, or indirectly by correcting the underlying model using knowledge and constraints.
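A toy version of this pairing is sketched below: two expert rules wrap a data-driven score, and every recommendation carries its reasons so the user can see why it was made. This is an illustrative sketch, not the method of any particular product. The thresholds, the action names and the linear `learned_risk_score` stand-in for a trained model are all assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Recommendation:
    action: str
    reasons: List[str]


def learned_risk_score(temp_c: float) -> float:
    """Stand-in for a trained model: a made-up linear score clipped to [0, 1]."""
    return max(0.0, min(1.0, (temp_c - 60.0) / 40.0))


def recommend(temp_a_c: float, temp_b_c: float,
              max_safe_c: float = 90.0,
              max_disagreement_c: float = 5.0) -> Recommendation:
    """Combine encoded expert rules with a data-driven score."""
    # Rule 1 (expert knowledge): conflicting sensors make the input untrustworthy,
    # so the learned score is not consulted at all.
    gap = abs(temp_a_c - temp_b_c)
    if gap > max_disagreement_c:
        return Recommendation(
            "inspect_sensors",
            [f"sensors disagree by {gap:.1f} C (limit {max_disagreement_c:.1f} C)"])

    temp_c = (temp_a_c + temp_b_c) / 2.0

    # Rule 2 (hard constraint): the safety limit overrides any learned score.
    if temp_c > max_safe_c:
        return Recommendation(
            "shut_down",
            [f"temperature {temp_c:.1f} C exceeds safe limit {max_safe_c:.1f} C"])

    # Otherwise defer to the data-driven component and report its score.
    score = learned_risk_score(temp_c)
    action = "reduce_load" if score >= 0.5 else "continue"
    return Recommendation(action, [f"learned risk score {score:.2f}"])


for reading in [(70.0, 85.0), (95.0, 94.0), (85.0, 84.0), (62.0, 63.0)]:
    print(reading, recommend(*reading))
```

The ordering is the design choice: the rules handle the unhappy paths where the learned score cannot be trusted, and the score handles the routine cases the rules do not cover.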

Using hybrid AI in IoT, and in other systems that operate at the edge, unlocks new potential for connectivity, user experience and costly operational decisions. The vision of the future for AI includes cognitive systems that can accomplish tasks where traditional machine learning systems fall short, at the edge of the network and beyond, across the larger ecosystem and value chain: intelligently and fluently interacting with human experts, providing articulate, actionable explanations, and enhancing user trust and confidence in decision making.

Ari Kamlani is a senior AI solutions architect and data scientist at Beyond Limits. Edited by Chris Vavra, web content manager, Control Engineering, CFE Media and Technology.

MORE ANSWERS

Keywords: edge computing, hybrid AI, artificial intelligence

CONSIDER THIS

What potential benefits can AI and edge computing provide to your applications?