Embedded software development, a component of control systems and mechatronics, differs from other engineering disciplines in key ways, according to respondents to recent Cambashi research interviews.

Software has been an important component of control systems for many years, and it continues to grow in importance. For example, a faster processor in a control unit may allow a product to perform better and reduce costs by using simpler, lower-cost mechanical parts, but only if the sensor and actuator control software is good enough. Software-intensive, networked electronics are becoming increasingly central to the performance of products of all kinds, from industrial to consumer. More engineering teams are facing questions about the best way to handle software development as a key part of product development projects. [This includes mechatronics-based designs, which integrate mechanical and electronic elements, including embedded software.]

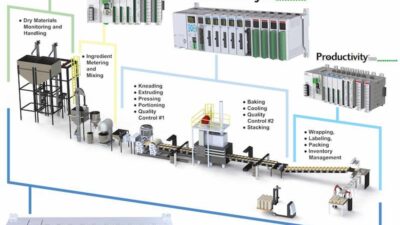

Software development engineers, methods, and tools can be integrated into product development teams. The examples here come from industries and product types such as medical devices, radar subsystems, transportation equipment, production monitoring, aerospace, and communications. Approaches used by some of these companies can aid software development in new product introduction projects.

Software engineers are people

Company A’s head of engineering judged all development methods in use, software and hardware, in relation to a “good engineering” benchmark, which included proper framing of requirements, proper information and investigation of problems, and proper sign-off and communication of changes. The development team was modestly sized (tens, not hundreds). After some years of being distributed across multiple sites, this team (for hardware and software) had been centralized at one site. The most important engineering management tool was the Monday morning meeting of group leaders.

Company B’s development team was distributed across the world. Even so, during the one or two hours each day that fell within normal working hours for everyone on the team, team members ran a 45-minute online exception-reporting meeting (headset, voice over IP, screen-sharing). The development leader confirmed he had been tempted to use the company’s issue tracking system in place of this meeting, but was glad to be forcing direct contact among the group leaders involved.

In both cases, direct communication among people was perceived as reducing the chance of misunderstanding and leading to better articulation and prioritization of potentially complex follow-up actions. However, the products and teams involved were in many ways outliers. These two teams handled mainly software; the electronic and mechanical parts of their products were relatively static, and not the key source of competitive advantage. The engineering was not very different from that of software-only development teams: the hardware could be treated as a special peripheral device. But these teams’ success using old-school, person-to-person communication as a core part of the development process is worth remembering.

Software is different

For products in which hardware is a larger part of the overall project, there was a range of approaches.

Company C highlighted issues relating to project management. Software had grown rapidly as a product development technology and, at the time of the interview, represented more than half of new product development costs and “nearly all the problems.” Yet, going back 20 years, embedded software had been restricted to a few high-end products and was provided by specialist subcontractors. Current project managers were mainly engineers who had been with the firm for many years, and their hands-on experience was largely in electronics hardware development. The development organization used a product-team structure. This created a mismatch between project managers (mainly from hardware development backgrounds) and the 2-10 software engineers in the 5-20 lead product development teams. Though this was not a crisis, project managers felt uncomfortable when they were unable to use their own experience to guide and question the decisions, priorities, and especially time estimates provided by software people.

Company D had an organizational solution for these problems. It had similarly chosen product teams as the basic unit for development projects. Like company C, each product team contained a mix of specialists—mechanical, electrical, electronic, software. In recent years, company D gave the electronics and software people an additional “home” by grouping them into one “control-systems” unit, then assigning them back into product teams, but as a slightly autonomous control-system team. Overall management of objectives, tasks, and priorities derived from the product team. The control-system team leader in the product team (with a lot of autonomy over the hands-on tools and methods used by that particular control-system team) provided day-to-day management.

At the time of the research interview, company D was aiming to make more use of the control-systems unit to develop more consistent use of tools, standards, and libraries and to find ways to achieve better levels of reuse (of everything, from know-how to architectures to platform electronics and software).

Central, not standard

Companies E, F, and G described centralized software organizations with names such as “software competence center” and “embedded software,” containing tens or hundreds of software engineers. Each product development project involved senior people from the software organization as part of a multidisciplinary project design team. Team output included outline architectures (with broad agreement on what would be in hardware and in software) and agreement on how to resource the project. Usually this meant a specification was taken back to the software team for implementation; sometimes it might be a temporary assignment for software engineers to work alongside the electronics team.

Projects inside these companies’ software organizations were not isolated; there was continuous communication with non-software people. But the buzz in the teams came from a pure software focus. For example, the teams were free to experiment and develop agile methods. The skill sets and technologies covered were diverse rather than standard, being driven mainly by the nature of the product and the underlying electronics, which would eventually run the software. However, the synchronization between software development and other technologies was “guaranteed” by strict stage-gate or similar processes. By whatever methods, the software team had to deliver exactly what was listed as the required software artifact for each stage-gate decision process.
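The stage-gate rule described above, delivering exactly the listed artifacts for each gate, can be pictured as a simple set comparison. The following is a minimal sketch only; the function names and artifact names are hypothetical and are not taken from any respondent's process or tool.

```python
def gate_review(required: set, delivered: set) -> dict:
    """Compare the artifacts a gate requires with what the team delivered.

    Both arguments are sets of artifact names (hypothetical examples:
    "architecture outline", "unit test report").
    """
    return {
        # Listed for the gate but not handed over.
        "missing": sorted(required - delivered),
        # Handed over but not on the gate's list.
        "unexpected": sorted(delivered - required),
    }


def gate_passes(required: set, delivered: set) -> bool:
    """A gate passes only when the delivered set matches the list exactly."""
    review = gate_review(required, delivered)
    return not review["missing"] and not review["unexpected"]


if __name__ == "__main__":
    required = {"architecture outline", "interface spec", "unit test report"}
    delivered = {"architecture outline", "unit test report", "demo video"}
    print(gate_review(required, delivered))
    print(gate_passes(required, delivered))
```

The check says nothing about how the artifacts were produced, which matches the respondents' point that the software teams stayed free to choose their own methods between gates.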

Tools and techniques

Company H had a very clear view about tools and procedures it needed for software development, and the ways these should integrate into its overall product development system. The basis of its thinking was that there should be two separate streams. One stream included all management systems—product lifecycle management (PLM), application lifecycle management (ALM), workflow, issue tracking, source code management, configuration management, change control, and test management. The second stream covered the technical tools—requirements management, modeling systems, integrated development environments for software, compilers, loaders, static and dynamic analysis tools, simulation systems, and test development.

A critical issue identified by company H (and shared by other companies researched) is how to bring together management tools, especially PLM and ALM, to provide a consistent picture. PLM technology earned its place in the toolbox through managing mechanical and electronics projects, and has often been implemented in parallel with organizational change to break down silos and achieve a “product-lifecycle” way of thinking. Very similar comments apply to ALM technology, except its origins are in software development. The expectation is that PLM vendors will ultimately adapt their systems to handle software in more fluent and flexible ways. But engineering managers recognize this is not a simple process for a range of reasons. Some reasons involve underlying approach and methods.

For example, software people are used to change happening one or two orders of magnitude faster than hardware engineers are. This is even built into development methods: the value that software development places on early and frequent prototypes is at odds with methods in hardware development, which for many years have aimed at reducing the number of physical prototypes. Some reasons are more technical; for example, consider the bill-of-materials structures at the heart of PLM solutions. “Finished” software files (that is, ready to flash into a memory chip) can be managed using these structures just like any other component. However, the source code for software is often a less comfortable fit, because sub-trees of source code spread across multiple files may need independent revision histories, and parameterized software build procedures may create different deliverables from the same source code or model-based software definitions.
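One way to picture the parameterized-build problem: a single source revision yields several distinct deliverables, so a bill-of-materials entry has to identify the pairing of source revision and build parameters, not the source alone. The sketch below is illustrative only. The function name, the parameter names, and the hashing scheme are assumptions made for this example and do not describe any PLM or ALM product.

```python
import hashlib
import json


def deliverable_id(source_revision: str, build_params: dict) -> str:
    """Derive a stable identifier for one built deliverable.

    The identifier depends on both the source revision and the build
    parameters, so two builds of the same source with different
    parameters become two distinct bill-of-materials items.
    """
    # sort_keys makes the identifier independent of parameter order.
    canonical = json.dumps(
        {"revision": source_revision, "params": build_params},
        sort_keys=True,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


if __name__ == "__main__":
    revision = "r1842"  # hypothetical source revision label
    variants = [
        {"target": "sensor-board-a", "region": "EU"},
        {"target": "sensor-board-a", "region": "US"},
        {"target": "sensor-board-b", "region": "EU"},
    ]
    for params in variants:
        print(deliverable_id(revision, params), params)
```

In this sketch all three variants share one source revision but receive different identifiers, which is the structure a component-oriented bill of materials has to accommodate.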

Where’s the action?

The systems engineering V-model is alive and well. Many respondents, not just those from the systems engineering strongholds of automotive, aerospace, and defense, explained they use it as a map of the artifacts that must be created in a project, noting, “the V is not a workflow.” The most-often-cited example involved testing. The V-model acts as a useful reminder that every requirement developed during the requirements cascade going down the left-hand arm of the V must be matched by a suitable test/verification/validation procedure on the right-hand arm of the V. This leads to traceability initiatives that record exactly which upstream requirements relate to which test specification.
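The matching rule between the two arms of the V can be expressed as a small coverage check. The following is a minimal sketch under stated assumptions: the `Requirement` and `VerificationSpec` classes, their fields, and the identifiers are hypothetical and stand in for whatever a real requirements or test management tool would hold.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str


@dataclass
class VerificationSpec:
    spec_id: str
    # IDs of the upstream requirements this specification verifies.
    verifies: list = field(default_factory=list)


def unverified_requirements(requirements, specs):
    """Return IDs of requirements with no matching verification spec."""
    covered = {req_id for spec in specs for req_id in spec.verifies}
    return [r.req_id for r in requirements if r.req_id not in covered]


def dangling_links(requirements, specs):
    """Return (spec_id, req_id) pairs that point at unknown requirements."""
    known = {r.req_id for r in requirements}
    return [
        (spec.spec_id, req_id)
        for spec in specs
        for req_id in spec.verifies
        if req_id not in known
    ]


if __name__ == "__main__":
    reqs = [
        Requirement("REQ-1", "Motor stops within 50 ms of an e-stop signal"),
        Requirement("REQ-2", "Sensor faults are logged"),
    ]
    specs = [VerificationSpec("VER-1", ["REQ-1", "REQ-9"])]
    print(unverified_requirements(reqs, specs))
    print(dangling_links(reqs, specs))
```

Running both checks in each direction reflects the "map of artifacts" reading of the V: the result lists what is missing without prescribing the order in which the work is done.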

Regulations affecting development methods exist in many industries; medical device design and manufacturing is a good example. Here, lowering the cost of obtaining and maintaining the right certifications is a high priority. On the software side, this means the capability of ALM to provide the evidence that approved development methods were followed is highly valued alongside any productivity improvement that ALM may offer.

Future: Modularity, interfaces

Software teams are among those required to achieve more with less—more configurations, faster change of products, and fewer software engineers. This means more reuse is needed, and this means modularity, standard interfaces, and the use of “external” software components—for example, the software stack to operate a sensor. Strategies such as common platforms are relevant to software as well as hardware to organize these efforts.

The discipline of product-line engineering (essentially, standard parts and part-count reduction across the scope of a product family or whole product portfolio) addresses this reuse objective. Respondents pointed out how difficult it is to retrofit reuse into existing software. Some reported better software reuse after they moved from source code to models as the core representation of software (source code or binaries are generated more-or-less automatically from the models). However, while the use of model-based software development was established and even routine for production software in the automotive sector, other sectors limited the use of models to documentation, early design, and prototyping.

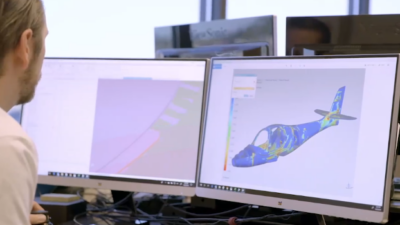

It is not hard to get nodding agreement with a vision of convergence of technical tools from electronic design automation (EDA), mechanical, electrical, and software disciplines resulting in flexible multi-disciplinary models. However, the expected form this convergence will take varies enormously, from an integration of independent point tools using sophisticated workflow and data interfacing techniques, to a brand new technical toolset in which requirements are simply parameters to a technology configuration model.

But at least the vision for the way these models will change software development is consistent. Software will start life as an initial high-level outline architecture proposal that is part of the overall model. This architecture will be developed seamlessly into something that can be simulated, then further developed into something that can be executed, and eventually into the final deliverable. Glimpses of how this can work can be seen in some system-on-a-chip development tools, which allow electronics designers to delay choosing the boundary between hardware and software until very late in the project, and also in the simulation world, with hardware-in-the-loop and software-in-the-loop simulation. When the use of these sorts of tools is commonplace, software engineers will still be different, but perhaps a bit less so.

– Peter Thorne is managing director for Cambashi. He is responsible for consulting projects related to the new product introduction process, e-business, and other industrial applications of information and communication technologies. He has applied information technology to engineering and manufacturing enterprises for more than 20 years, holding development, marketing, and management positions with user and vendor organizations. Immediately prior to joining Cambashi in 1996, he headed the U.K. arm of a major IT vendor’s engineering systems business unit. Edited by Mark T. Hoske, content manager, CFE Media, Control Engineering, Plant Engineering, and Consulting-Specifying Engineer.
