A paradigm shift toward industrial edge and cloud computing.

Most industrial automation applications demand high reliability and availability for control devices and associated input and output (I/O) elements. Modicon (now Schneider Electric) introduced the first discrete programmable logic controller (PLC) in 1968, and Allen-Bradley coined the term PLC in 1971. Since then, PLCs have been widely adopted as the means of control in production lines in the manufacturing industry. Although manufacturers generally employ an array of PLCs to execute I/O controls precisely, each PLC needs its own communication ports and controller unit, making it bulky and expensive. It is also expensive to update programs once they are deployed.

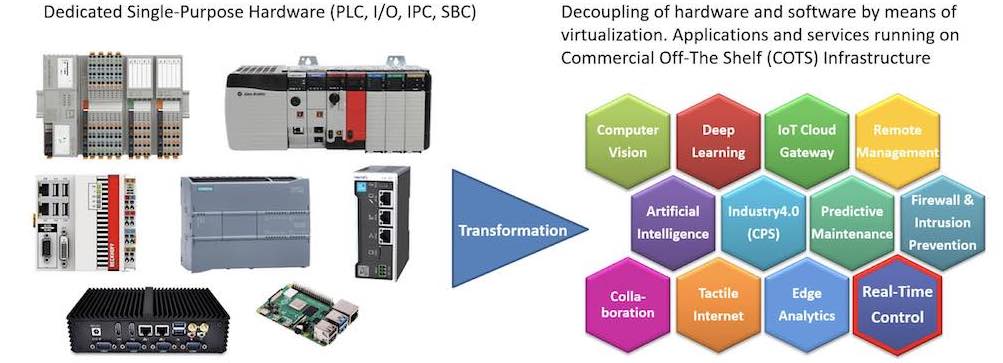

Virtualized PLCs (vPLCs) on commercial-off-the-shelf (COTS) infrastructure can replace a large number of individual controller “boxes” and their electronics. This is edge computing, where information technology (IT) cloud infrastructure is located close to the machines on the shop floor, which makes it possible to meet the stringent requirements of very low latency and short control cycles. This article assumes the edge computing infrastructure is located on premise within the factory or plant. Such an arrangement enables a paradigm shift: interconnecting a large number of vPLCs on an edge node to improve operational efficiency. Although vPLCs have been only sporadically adopted, this architecture could gain importance with the advent of edge computing. In data centers, virtualization of databases and applications on powerful servers has long been state of the art.

Virtualization is the ability to separate logical functions (software) from the physical device and to run them on commodity hardware. In IT, virtualization has reduced costs, increased flexibility and scalability, and improved reliability and performance. Over the past decade, we have seen many vendors supporting their supervisory control and data acquisition (SCADA) and distributed control system (DCS) platforms within a virtualized environment, leading to more virtualization in operational technology (OT) environments.

More recently, we have seen a number of DCS vendors deploy virtualized controller CPUs where they need to increase performance beyond the capabilities of their current line of controllers and to reduce the cost of controllers for strategic accounts. These virtualized DCS controllers run on commodity IT hardware such as Windows or Linux servers.

Integrated, edge-based architecture

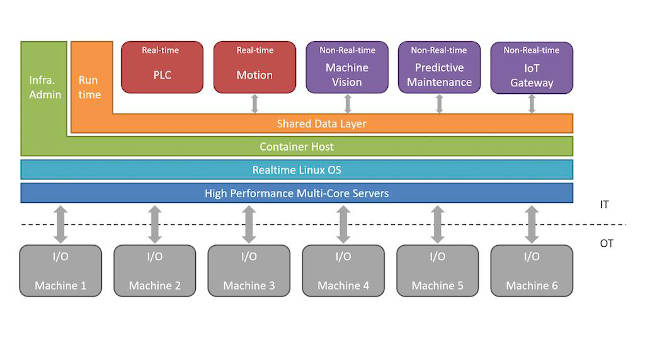

The following situation is conceivable: discrete PLCs are removed from the shop floor and their control functions are hosted in an edge data center in the form of vPLCs, with suitable computing capacity and a network connection to the automation system. Servers can already process hundreds of vPLCs at the same time, but standard IT servers with virtualization and shared resources for network and storage cannot adequately address OT industry requirements for reliability and real-time behavior. Meeting those requirements calls for industrial edge servers that can run real-time workloads. Only the I/O stays local to the machines, sensors, actuators and drives. Some examples of industrial workloads including real-time control are shown in Figure 1‑1.

With no functional changes, an integrated architecture dramatically decreases capital and operational expenditures compared to a decentralized architecture based on individual PLCs, since a large number of virtualized PLCs can be hosted on a single server.

A clear difference between vPLCs and conventional PLCs lies in flexibility and expandability. Virtualizing control functions and running them in the edge data center simplifies interactions between virtual controllers. Communication among virtual controllers can be implemented as function calls within a single server, which increases reliability and scalability compared with traditional communication between physically separated PLCs. It also facilitates updating and redesigning the production line. With the virtual control functions running in the data center, the edge platform can act as a “digital twin” of the production line, which helps to simulate and predict the behavior of the physical counterpart.
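To make the point about communication concrete, the following is a minimal sketch, assuming two controllers hosted in the same process. The `VirtualPLC` class, the `parts_ready` signal and the pick limit of 3 are hypothetical names and values chosen for illustration; they do not come from any vPLC product.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class VirtualPLC:
    """Hypothetical stand-in for one virtualized controller."""

    name: str
    # Handlers that peer controllers may call directly, keyed by signal name.
    handlers: Dict[str, Callable[[int], int]] = field(default_factory=dict)

    def expose(self, signal: str, handler: Callable[[int], int]) -> None:
        """Register a handler that other controllers on this server can call."""
        self.handlers[signal] = handler

    def call(self, peer: "VirtualPLC", signal: str, value: int) -> int:
        # With both controllers on one server this is an ordinary function
        # call; between physical PLCs it would be a network exchange.
        return peer.handlers[signal](value)


conveyor = VirtualPLC("conveyor")
robot = VirtualPLC("robot")

# The robot controller accepts a part count and reports how many parts it can
# pick in the current cycle (the limit of 3 is an illustrative assumption).
robot.expose("parts_ready", lambda count: min(count, 3))

picked = conveyor.call(robot, "parts_ready", 5)
print(picked)
```

In a real platform the controllers would typically run in separate partitions and exchange data through shared memory or a local message channel rather than a Python call, but the design point is the same: no physical link sits between them.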

Because field-level data is accessible in the edge data center, controls and data analytics can be carried out in real time, which is ideal for diagnostics, maintenance, optimization and intelligent reactions to changes in the automation system. The big data analysis doesn’t run “on” the controls but “parallel to” them on the same edge servers. Therefore, modern AI and machine learning algorithms could be applied here without interfering with the existing control process. Since the control functions are hosted on the same edge infrastructure, feedback loops from data analytics to the control can also be implemented here, thus opening up new optimization options.
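The idea of analytics running beside the control, with a feedback path, can be sketched as follows. This is a simplified single-threaded illustration under stated assumptions: the function names, the buffer size of 100 and the drift-correction rule are all hypothetical, and a real deployment would run the analytics in a separate process or on a separate core.

```python
from collections import deque
from statistics import mean

# Bounded buffer: appending never blocks, and the oldest sample is dropped
# when the buffer is full, so slow analytics cannot stall the control cycle.
samples: deque = deque(maxlen=100)


def control_cycle(setpoint: float, measured: float) -> float:
    """Record the measurement and return the control error."""
    samples.append(measured)
    return setpoint - measured


def propose_setpoint(current_setpoint: float, target: float) -> float:
    """Analytics pass: read recorded samples and suggest a corrected setpoint."""
    if not samples:
        return current_setpoint
    drift = mean(samples) - target
    return current_setpoint - drift


# Three cycles in which the process runs slightly above the target.
for measured in (10.5, 10.5, 10.5):
    control_cycle(setpoint=10.0, measured=measured)

new_setpoint = propose_setpoint(current_setpoint=10.0, target=10.0)
print(new_setpoint)
```

The analytics function only reads the recorded samples and returns a suggestion; whether and when the control adopts the new setpoint remains a decision of the control application.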

Four implementation challenges and considerations

Why is this architecture not (yet) prevalent? Surveys have identified four arguments:

- The current architecture is tried and tested: The cost advantages, flexibility and optimization are desirable, but the gains are not seen as worth the change. The rule is “never touch a running system” unless there are significant gains in capital and operational expenditures.

- Service-level agreement and liability: The factory and plant operators bought a “closed” solution from the vendor or system integrator. This often has closed interfaces, so the machine cannot be adapted to a different control architecture. If the machine is not working as expected, the vendor or system integrator must fix it.

- Technological risks: The reliability and determinism of integrated server platforms are not trustworthy enough to outsource critical control functions to them. The response time of controllers in an edge data center may also be unreliable due to the network.

- Organizational hurdles: To implement an integrated platform of this kind, the control specialists need new skills. The distribution of competencies in the company is often incompatible with an integrated architecture.

The advantages of virtualizing control at the industrial edge must be judged for each application. In real-time data acquisition and edge analytics, advantages can be found in applications that rely on flexible production processes and reactive process changes as part of an Industry 4.0 strategy (batch size one). Because of its flexibility, an edge computing infrastructure is worthwhile for applications that place high demands on dynamic changes in automation.

The technological risks are challenging, but recent developments show what is already possible:

- Real-time operating systems and hypervisor solutions already offer mechanisms for guaranteed, robust partitioning of resources such as CPU cores and cache on current multicore CPUs. Instead of the runtime fluctuations usually associated with virtualization, real-time performance like “bare metal” can be achieved. The more cores there are, the more real-time applications can be run simultaneously and independently of one another.

- Hardware-supported network virtualization of the local Ethernet interface(s) enables several applications on the same server to use the network resources independently of one another, with the required network bandwidth available in real time. Time-sensitive networking (TSN) and deterministic IP provide the mechanisms to transport real-time data from different applications through an Ethernet network independently, without interference and with guaranteed latency.

- For various fieldbus protocols such as EtherCAT and PROFINET, specifications are already available that define “tunneling” via TSN. This enables I/O at the field level to communicate with the vPLCs in the edge data center through one network, as if they were directly connected via the fieldbus.

- There is a strong trend towards manufacturer-independent interfaces for application and management. The current specification work in the Motion Working Group of the OPC Foundation aims to ensure vendor-independent interoperability between controls and motion control devices (drives, I/O) based on OPC UA Publish/Subscribe. Similar standards for manufacturer-independent interoperability based on OPC UA and TSN have already been adopted by other industrial consortia and associations (e.g. Euromap and OPAF). Manufacturer-independent management interfaces may also play a role.

With these mechanisms, technologies and standards, the integrated and edge-based architecture can be implemented, as it already is in various test beds and demonstrators.
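The core-partitioning idea from the first point above can be sketched as a simple planning function. This is a minimal illustration, assuming each real-time workload gets one dedicated core and a few cores stay with the general-purpose operating system; the function name, workload names and core counts are hypothetical, and cache partitioning is not modeled.

```python
from typing import Dict, List


def partition_cores(total_cores: int, housekeeping_cores: int,
                    workloads: List[str]) -> Dict[str, int]:
    """Give each real-time workload its own dedicated core.

    Cores 0..housekeeping_cores-1 are left to the general-purpose OS;
    every remaining core hosts at most one real-time workload.
    """
    isolated = list(range(housekeeping_cores, total_cores))
    if len(workloads) > len(isolated):
        raise ValueError(
            f"{len(workloads)} workloads need dedicated cores, "
            f"only {len(isolated)} available")
    return dict(zip(workloads, isolated))


plan = partition_cores(
    total_cores=8,
    housekeeping_cores=2,
    workloads=["vplc_press", "vplc_conveyor", "vplc_robot"],
)
print(plan)
# On Linux, a process could then be pinned to its core with
# os.sched_setaffinity(pid, {core}); this sketch only computes the plan.
```

The check that raises `ValueError` reflects the statement above: the number of cores bounds how many real-time applications can run independently of one another.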

Nothing changes in the model of the control with regard to programming and runtime behavior: The IEC 61131 programming model can be used unchanged, even if the resulting control application is implemented as a virtualized PLC and only communicates with the field level via fieldbus interfaces. Meanwhile, individual developments already aim to expand the integrated architecture in accordance with the requirements of IEC/EN 61499 for distributed controls.
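One way to picture why the control model stays the same is the cyclic read-execute-write pattern commonly associated with PLC programs. The sketch below is written in Python for illustration rather than in an IEC 61131-3 language, and `FieldIO`, `SimulatedIO` and `motor_logic` are hypothetical names. It assumes the control logic only sees a process image, so it does not care whether the I/O is wired locally or reached over a fieldbus.

```python
from typing import Callable, Dict, Protocol

Image = Dict[str, bool]


class FieldIO(Protocol):
    """Anything that can exchange a process image with the field level."""

    def read_inputs(self) -> Image: ...

    def write_outputs(self, outputs: Image) -> None: ...


class SimulatedIO:
    """In-memory stand-in for a fieldbus connection (assumption for the sketch)."""

    def __init__(self, inputs: Image) -> None:
        self.inputs: Image = dict(inputs)
        self.outputs: Image = {}

    def read_inputs(self) -> Image:
        return dict(self.inputs)

    def write_outputs(self, outputs: Image) -> None:
        self.outputs = dict(outputs)


def motor_logic(inputs: Image) -> Image:
    # Run the motor only while start is requested and the guard is closed.
    return {"motor_on": inputs["start"] and inputs["guard_closed"]}


def scan_cycle(io: FieldIO, logic: Callable[[Image], Image]) -> None:
    """One cycle: read inputs, execute the logic, write outputs."""
    inputs = io.read_inputs()
    outputs = logic(inputs)
    io.write_outputs(outputs)


io = SimulatedIO({"start": True, "guard_closed": True})
scan_cycle(io, motor_logic)
print(io.outputs)
```

Swapping `SimulatedIO` for a class that talks to remote I/O would leave `motor_logic` and `scan_cycle` untouched, which is the sense in which the programming model carries over to a vPLC.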

The organizational challenges are not negligible. Configuring, commissioning and maintaining an edge platform that hosts controls is a different task from programming and operating a PLC. Therefore, the lifecycle of the control applications remains the domain of the experts for these applications as before, while the IT department takes care of the installation and maintenance of the edge servers and the hosting infrastructure running on them. The essential interfaces, which ensure robustness and determinism in accordance with the requirements of the automation industry, must be contained in a certified edge computing platform product, because this competence often resides neither with the controls experts nor with the IT department.

But this does not change anything in the basic model. Figure 2‑1 shows the potential architecture of a hypothetical automation platform.

A look into the future

An integrated edge-based architecture for controls in automation is not yet state of the art. The current architecture, in which most controllers are implemented as hardware PLCs, is well established. However, where more flexibility is needed, the methods and technologies for integrating virtualized controls in an edge computing architecture with edge nodes and edge data centers can offer great benefits.

There are challenges ahead for full PLC virtualization to become a reality. For example, there are fundamental differences between the deterministic nature of PLCs and the nondeterministic, performance-focused nature of traditional cloud services such as office applications. Full PLC virtualization is unlikely to occur without one or more vendors getting involved in this technology shift. The vendors creating this reality would have greater market influence as the “VMware of OT.”

Business considerations

Although using virtual PLCs in industrial control opens up an opportunity to integrate recent advances in information and communications technology (ICT), the adoption rate in the manufacturing industry still has room to improve. We recommend deploying vPLCs in greenfield installations. To get the maximum benefit, they should be integrated with the actuators and sensors on the production line, and the controller hosting the vPLCs should be made part of the Smart Factory supporting subsystems.

David Lou, Ph.D., chief researcher, Huawei Technologies; Ulrich Graf, senior engineer, Huawei Technologies; Mitch Tseng, Ph.D., managing member, TSENG InfoServ, LLC. This article originally appeared on the IIC JOI. The Industry IoT Consortium is a CFE Media content partner. Edited by Chris Vavra, web content manager, Control Engineering, CFE Media and Technology.