Robotics, cars, and wheelchairs are among the beneficiaries of artificial intelligence, which is making control loops smarter, more adaptive, and able to change behavior, hopefully for the better. University of Portsmouth researchers in the U.K. discuss how AI can help control engineering, summarized here. Below, see 7 AI-boosting breakthroughs, and online, see more examples, trends, explanations, and references in a 15-page article. Link to a 2013 article explaining how “Artificial intelligence tools can aid sensor systems.”

Key Concepts

- Artificial intelligence (AI) can help control engineering.

- Key AI applications include assembly, computer vision, robotics, sensing, computing.

- AI has a long way to go before it could be dangerous.

As has happened at many other times in our engineering careers, members of the research team at Portsmouth found themselves struggling yesterday with two common problems in control engineering. As usual, some inner control loops had to behave in a predictable and desired manner with strict timing, but the outer loops had to decide what those predictable inner loops were to do; they had to provide setpoints (or reference points) or reference profiles for the controllers in the inner loop to use as inputs. Can artificial intelligence (AI) be useful in this sort of control engineering problem?

– – –

Editor’s note: This online version greatly expands the summary print version, adding sections on Artificial intelligence tools; Analog vs. digital; unbounded vs. defined; Intelligent machines, so far; 12 key AI applications; 4 ways AI advances computer ability; Could AI turn against us?; Improving decision quality; More for AI before it is scary; Smarter, with benefits; Right tools for the right task; and Virtual world. Also see references and related links.

– – –

Inner control loops

Control engineering is all about getting a diverse range of dynamic systems (for example, mechanical systems) to do what you want them to do. That involves designing controllers. The inner loop problems we faced yesterday were about creating a suitable controller for a system or plant to be controlled. That meant interfacing systems to sensors and actuators (in this case wheelchair motors and ultrasonic sensors for obstacle avoidance), and the problems could be solved using computer interrupts, timer circuits, additional microcontrollers, or simple open-loop control.

Inner control loops are similar to autonomic nervous systems in animals. They tend to be control systems that act largely unconsciously to do things like regulate heart and respiratory rate, pupillary response, and other natural functions.

This system is also the primary mechanism in controlling the fight-or-flight responses first described by Walter Cannon (1929 & 1932); it primes an animal to fight or flee (Jansen et al., 1995; Schmidt & Thews, 1989). The autonomic nervous system has two branches: the sympathetic nervous system and the parasympathetic nervous system (Pocock, 2006). The sympathetic nervous system is a quick response mobilizing system, and the parasympathetic is a more slowly activated dampening system. In our case that was similar to quickly controlling the powered wheelchair motors and more slowly monitoring sensor systems for urgent reasons to quickly change a motor input.

Automatic control

When a device is designed to perform without the need of conscious human inputs for correction, it is called automatic control. Automatic control systems were first developed over 2,000 years ago, an early example being Ktesibios’s water clock in Egypt (third century BC). Since then, many automatic control devices have been used over the centuries, older ones often being open-loop and more recent ones often being closed-loop.

Examples of relatively early closed-loop automatic control devices that used sensor feedback in an inner loop include the temperature regulator of a furnace attributed to Drebbel in about 1620 and the centrifugal fly ball governor used for regulating the speed of steam engines by James Watt in 1788. Most control systems of that time used governor mechanisms, and Maxwell (1868) used differential equations to investigate the control systems dynamics for the systems. Routh (1874) and Hurwitz (1895) then investigated stability conditions for the systems. The idea was to use sensors to measure the output performance of a device being controlled so that those measurements could be used to provide feedback to input actuators that could make corrections toward some desired performance.
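The value of sensor feedback can be illustrated with a toy furnace simulation, loosely in the spirit of a temperature regulator. This is only a sketch: the first-order thermal model, the function name `final_temperature`, and every number in it are assumptions made for illustration, not anything taken from the historical devices.

```python
def final_temperature(closed_loop, feedback_gain=5.0, steps=2000, dt=1.0):
    """Simulate a toy furnace and return its temperature at the end of the run.

    Model (assumed): capacity * dT/dt = heater_power - loss * (T - ambient).
    Halfway through, the ambient temperature drops, acting as a disturbance.
    """
    target = 200.0      # desired furnace temperature, degrees C (assumed)
    ambient = 20.0      # surrounding temperature, degrees C (assumed)
    loss = 0.5          # heat loss coefficient (assumed)
    capacity = 50.0     # thermal capacity (assumed)
    temp = ambient

    # Heater power that would hold the target if nothing ever changed.
    nominal_power = loss * (target - ambient)

    for k in range(steps):
        if k == steps // 2:
            ambient = 0.0   # disturbance: the surroundings get colder

        power = nominal_power
        if closed_loop:
            # Feedback: measure the output and correct the input accordingly.
            power += feedback_gain * (target - temp)
        power = max(0.0, power)   # a heater cannot cool

        temp += dt * (power - loss * (temp - ambient)) / capacity
    return temp


open_loop_temp = final_temperature(closed_loop=False)
closed_loop_temp = final_temperature(closed_loop=True)
print(f"open loop:   {open_loop_temp:.1f} C")
print(f"closed loop: {closed_loop_temp:.1f} C")
```

In this sketch the open-loop furnace settles well below the target after the disturbance, while the closed-loop version stays close to it. A small offset remains because the feedback here is proportional only.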

Feedback controllers

Feedback controllers began to be created as separate multipurpose devices, and Minorsky (1922) invented three-term, or proportional-integral-derivative (PID), control at the General Electric Research Laboratory while helping install and test some automatic steering on board a ship. PID controllers have regularly been used for inner loops ever since, and these feedback controllers were used to develop ideas about optimal control in the 1950s and ’60s. The maximum principle was developed in 1956 (Pontryagin et al., 1962), and dynamic programming (Bellman, 1952 & 1957) laid the foundations of optimal control theory. That was followed by progress in stochastic and robust control techniques in the 1970s. The design methodologies of that time were for linear single-input, single-output systems and tended to be based on frequency response techniques or the Laplace transform solution of differential equations. The advent of computers and the need to control ballistic objects for which physical models could be constructed led to the state-space approach, which tended to replace the general differential equation by a system of first-order differential equations. That led to the development of modern systems and control theory with an emphasis on mathematical formulation.
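A three-term controller is short enough to sketch in a few lines. The example below is a minimal discrete-time PID loop driving a toy first-order motor model. The class name `PID`, the helper `run_speed_loop`, the gains, and the motor parameters are all hypothetical values chosen for illustration; a practical inner loop would also need output limits and anti-windup.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook three-term controller with a fixed sample time dt."""
    kp: float
    ki: float
    kd: float
    dt: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint, measurement):
        error = setpoint - measurement
        self.integral += error * self.dt                  # integral term state
        derivative = (error - self.prev_error) / self.dt  # finite difference
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def run_speed_loop(setpoint=1.0, steps=3000, dt=0.01):
    """Close the loop around an assumed motor: tau * dv/dt = -v + gain * u."""
    pid = PID(kp=2.0, ki=4.0, kd=0.05, dt=dt)   # illustrative gains only
    tau, gain = 0.5, 0.8                        # assumed motor parameters
    speed = 0.0
    for _ in range(steps):
        drive = pid.update(setpoint, speed)
        speed += dt * (-speed + gain * drive) / tau   # forward-Euler step
    return speed


print(f"speed after 30 s: {run_speed_loop():.4f}")
```

The integral term is what removes the steady-state error here: the motor model has a gain below one, so proportional action alone would settle short of the setpoint.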

Adaptive control

Controllers were traditionally electrical or at least electromechanical, but in 1969-1970 the Intel microprocessor was invented by Ted Hoff, and since then the price of microprocessors (and memory) has fallen roughly in line with Moore’s Law, which stated that the number of transistors on an integrated circuit would double roughly every two years. That made implementing a basic feedback control system in an inner loop almost trivial, and more recent systems have used robust control and then adaptive control. Adaptive control is used when the parameters of the controlled system vary or are initially uncertain, and it does not need a priori information about them. For example, as an aircraft flies, its mass will slowly decrease as a result of fuel consumption, or in our case, a powered wheelchair user may become more tired as the day goes on. In these cases a low-level control law is needed that adapts itself as conditions change.
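One simple way to picture a control law that adapts itself is a self-tuning loop that estimates an uncertain plant gain online and uses the estimate in the controller. The sketch below assumes a first-order plant `v[k+1] = a*v[k] + b*u[k]` whose gain `b` drifts slowly. The plant, the normalized-gradient estimator, the function name `run_adaptive_loop`, and all numbers are assumptions for illustration, not the method used on the wheelchairs described here.

```python
def run_adaptive_loop(steps=500, setpoint=1.0):
    """Self-tuning control of v[k+1] = a*v[k] + b*u[k] with unknown, drifting b.

    Returns the final output, the final gain estimate, and the final true gain.
    """
    a = 0.9             # plant pole, assumed known (hypothetical value)
    b_hat = 2.0         # deliberately poor initial guess of the plant gain
    adapt_rate = 0.5    # normalized gradient step; must lie between 0 and 2
    v = 0.0
    b_true = 1.0

    for k in range(steps):
        # The true gain drifts slowly from 1.0 towards 0.5 (conditions change).
        b_true = 1.0 - 0.5 * k / steps

        # Control law: pick u so that the current model predicts v == setpoint.
        u = (setpoint - a * v) / b_hat

        # The "real" plant responds using the true gain.
        v_next = a * v + b_true * u

        # Adaptation: move b_hat in proportion to the model's prediction error.
        prediction_error = v_next - (a * v + b_hat * u)
        b_hat += adapt_rate * u * prediction_error / (1e-6 + u * u)
        b_hat = max(b_hat, 0.05)   # keep the estimate away from zero

        v = v_next
    return v, b_hat, b_true


v, b_hat, b_true = run_adaptive_loop()
print(f"output {v:.3f}, estimated gain {b_hat:.3f}, true gain {b_true:.3f}")
```

Even though the controller starts with the wrong gain and the plant keeps changing, the estimate follows the true value and the output stays near the setpoint. A fixed-gain controller designed for the initial plant would not track the drift in this way.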

Outer control loops

Inner control loops need inputs. They need reference points or profiles. These were originally just a set value. For example, the input to Ktesibios’s water clock was a desired level of water, and the input to Drebbel’s temperature regulator was a specific temperature value. As the inner loops became more reliable and could largely be left unattended (although often monitored), attention shifted to control loops that sit outside of them.
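The relationship between the two levels can be sketched as a cascade: a slow outer loop decides on a speed setpoint, and a fast inner loop makes the motor follow it. Everything in this example is an assumption made for illustration, including the function name `cascade_demo`, the gains, the speed limit, and the first-order motor model.

```python
def cascade_demo(target_position=2.0, dt=0.01, steps=3000, outer_every=10):
    """Outer position loop supplies speed setpoints to an inner PI speed loop."""
    position, speed = 0.0, 0.0
    speed_setpoint = 0.0
    integral = 0.0
    tau = 0.3   # assumed motor time constant, seconds

    for k in range(steps):
        # Outer loop (runs every outer_every ticks): decide what the inner
        # loop should do, here a speed proportional to the remaining distance.
        if k % outer_every == 0:
            speed_setpoint = 0.8 * (target_position - position)
            speed_setpoint = max(-1.0, min(1.0, speed_setpoint))  # speed limit

        # Inner loop (runs every tick): PI control of motor speed.
        error = speed_setpoint - speed
        integral += error * dt
        drive = 3.0 * error + 6.0 * integral

        # Toy plant: first-order motor plus integration of speed to position.
        speed += dt * (-speed + drive) / tau
        position += dt * speed
    return position, speed


position, speed = cascade_demo()
print(f"final position {position:.3f} m, final speed {speed:.3f} m/s")
```

The outer loop never touches the motor directly. It only works because the inner loop follows its setpoint quickly and predictably, which is the division of labor described above.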

Where inner control loops are similar to autonomic nervous systems in animals, outer control loops are similar to their brains (Kandel, 2012). That is, they tend to be more conscious and less automatically predictable. Even flatworms have a simple and clearly defined nervous system with a central system and a peripheral system that includes a simple autonomic nervous system (Cleveland et al., 2008).

The brain is the higher control center for functions such as walking, talking, and swallowing. It controls our thinking functions, how we behave, and all our intellectual (cognitive) activities, such as how we attend to things, how we perceive and understand our world and its physical surroundings, how we learn and remember, and so on. In our case that was similar to deciding where a wheelchair user wanted to go and what the user might want to do, and whether or not control parameters should be adjusted because of them. In both cases, the more complicated higher level of control in the outer loops can only work if the lower level control in the inner loops acts in a reasonably predictable and repeatable fashion.

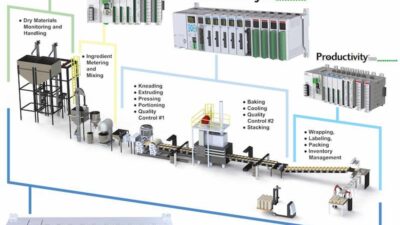

Originally, control engineering was all about continuous systems. Development of computers and microcontrollers led to discrete control system engineering because communications between the computer-based digital controllers and the physical systems were governed by clocks. Many control systems are now computer controlled and consist of both digital and analog components, and key to advancing their success is unsupervised and adaptive learning.

But a computer can do many things over and above controlling an outer loop to produce a desired input or inputs for some inner loops. Many people believe that the brain can be simulated by machines and because brains are intelligent, simulated brains must also be intelligent; thus machines can be intelligent. Some argue that it may be technologically feasible to copy the brain directly into hardware and software, and that such a simulation would be essentially identical to the original (Russell & Norvig, 2003; Crevier, 1993).

Computer programs have plenty of speed and memory, but their abilities only correspond to the intellectual mechanisms that program designers understood well enough to put into them. Some abilities that children normally don’t develop until they are teenagers may be in, and some abilities possessed by two-year-olds are still out (Basic Questions, 2015). The matter is further complicated because cognitive sciences still have not succeeded in determining exactly what human abilities are. The organization of the intellectual mechanisms for intelligent control can be different from that in people. Whenever people do better than computers on some task, or computers use a lot of computation to do as well as people, this demonstrates that the program designers lack understanding of the intellectual mechanisms required to do the task efficiently. Or perhaps the task can be done better in a different way.

While control engineers have been migrating from traditional electromechanical and analog electronic control technologies to digital mechatronic control systems incorporating computerized analysis and decision-making algorithms, novel computer technologies have appeared on the horizon that may change things even more (Masi, 2007). Outer loops have become more complicated and less predictable as microcontrollers and computers have developed over the decades, and they have increasingly come to be regarded as AI systems in the years since John McCarthy coined the term in 1955 (Skillings, 2006).

Artificial intelligence tools

AI is the intelligence exhibited by machines or software. AI in control engineering is often not about simulating human intelligence. We can learn something about how to make machines solve problems by observing other people, but most work in intelligent control involves studying real problems in the world rather than studying people or animals.

AI can be technical and specialized, and is often deeply divided into subfields that don’t communicate with each other at all (McCorduck, 2004), as different subfields focus on the solutions to specific problems. But general intelligence is still among the long-term goals (Kurzweil, 2005), and the central problems (or goals) of AI include reasoning, knowledge, planning, learning, communication, and perception. Currently popular approaches in control engineering to achieve them include statistical methods, computational intelligence, and traditional symbolic AI. The whole field is interdisciplinary and includes control engineers, computer scientists, mathematicians, psychologists, linguists, philosophers, and neuroscientists.

There are a large number of tools used in AI, including search and mathematical optimization, logic, methods based on probability, and many others. I reviewed an article about seven AI tools in Control Engineering that have proved to be useful with control and sensor systems (Sanders, 2013); they were knowledge-based systems, fuzzy logic, automatic knowledge acquisition, neural networks, genetic algorithms, case-based reasoning, and ambient-intelligence. Applications of these tools have become more widespread due to the power and affordability of present-day computers, and greater use may be made of hybrid tools that combine the strengths of two or more of them. Control engineering tools and methods tend to have less computational complexity than some other AI applications, and they can often be implemented with low-capability microcontrollers. The appropriate deployment of new AI tools will contribute to the creation of more capable control systems and applications. Other technological developments in AI that will impact on control engineering include data mining techniques, multi-agent systems, and distributed self-organizing systems.

AI assumes that at least some of something like human intelligence can be so precisely described that a machine can be made to simulate it. That raises philosophical issues about the nature of the mind and the ethics of creating AI endowed with some human-like intelligence, issues which have been addressed by myth, fiction, and philosophy since antiquity (McCorduck, 2004). But how do we do it? Well, mechanical or formal reasoning has been developed by philosophers and mathematicians since antiquity, and the study of logic led directly to the invention of the programmable digital electronic computer. Turing’s theory of computation suggested that a machine, by shuffling symbols as simple as “0” and “1”, could simulate any conceivable act of mathematical deduction (Berlinski, 2000).

This, along with discoveries in neurology, information theory, and cybernetics, inspired researchers to consider the possibility of building an electronic brain (McCorduck, 2004).

Computers can now win at checkers and chess, solve some word problems in algebra, prove logical theorems, and speak, but the difficulty of some of the problems that have been faced surprised everyone in the community. In 1974, in response to criticism from Lighthill (1973) and ongoing pressure from the U.S. Congress, both the U.S. and British governments cut off all undirected exploratory research in AI, leaving only specific research in areas like control engineering.

Analog vs. digital; unbounded vs. defined

The brain (of a human or of a fruit fly) is an analog computer (Dyson, 2014). It is not a digital computer, and intelligence may not be any sort of algorithm. Is there any evidence for a programmable digital computer evolving the ability to take initiative or make choices which are not on a list of options programmed in by a human anyway? Is there any reason to think that a digital computer is a good model for what goes on in the brain? We are not digital machines. Turing machines are discrete state / discrete time machines while we are continuous state / continuous time organisms.

We have made advances with continuous models of neural systems as nonlinear dynamical systems, but in all these cases the present state of the system tends to determine the next state of the system, so that next state is entailed by the laws programmed into the computer. There is nothing for consciousness to do in a digital control system as the current state of the system suffices entirely for the next state.

In the coming decades, humanity may create a powerful AI, but in 1999 I suggested that machine intelligence was just around the corner (Sanders, 1999). It has all taken longer than I thought it would, and there has been frustration along the way (Sanders, 2008), but what is the story so far?

Intelligent machines, so far

It was probably the idea of making a “child machine” that could improve itself by reading and learning from experience that began the study of machine intelligence. That was first proposed in the 1940s, and after World War II, a number of people independently started to work on intelligent machines. Alan Turing was one of the first, and after his 1947 lecture, Turing predicted that there would be intelligent computers by the end of the century. Zadeh (1950) published a paper entitled “Thinking Machines-A New Field in Electrical Engineering,” and Turing (1950) discussed the conditions for considering a machine to be intelligent that same year. He made his now famous argument that if a machine could successfully pretend to be human to a knowledgeable observer then it should be considered intelligent.

Later that decade, a group of computer scientists gathered at Dartmouth College in New Hampshire (in 1956) to consider a brand-new topic: artificial intelligence. It was John McCarthy (now a professor at Stanford) who coined the name “artificial intelligence” just ahead of that meeting. That debate served as a springboard for further discussion about ways that machines could simulate aspects of human cognition. An underlying assumption in those early discussions was that learning (and other aspects of human intelligence) could be precisely described. McCarthy defined AI there as “the science and engineering of making intelligent machines” (McCarthy, 2007; Russell & Norvig, 2003). The attendees at Dartmouth, including John McCarthy, Marvin Minsky, Allen Newell and Herbert Simon, then became the leaders of AI research for decades.

By the late 1950s, there were many researchers in the area, and most of them were basing their work on programming computers. Minsky (head of the MIT Laboratory) predicted in 1967 that “within a generation the problem of creating ‘artificial intelligence’ will be substantially solved” (Dreyfus, 2008). Then, the field ran into unexpected difficulties around 1970 with the failure of any machine to understand even the most basic children’s story. Machine intelligence programs lacked the intuitive common sense of a four-year-old, and Dreyfus still believes that no one knows what to do about it.

Now (nearly 60 years after that first conference), we still have not managed to create a “child machine.” Programs still can’t learn much of what a child learns naturally from physical experience.

But we do seem to be at a point in history when our human biology appears too frail, slow, and over-complicated in many industrial situations (Sanders, 2008). We are turning to powerful new control technologies to overcome those weaknesses, and the longer we use that technology, the more we are getting out of it. Our machines are exceeding human performance in more tasks. As they merge with us more intimately, and we combine our brain power with computer capacity to deliberate, analyze, deduce, communicate, and invent, many scientists are predicting a period when the pace of technological change will be so fast and far-reaching that our lives will be irreversibly altered.

A fundamental problem, though, is that nobody appears to know what intelligence is. Varying kinds and degrees of intelligence occur in people, many animals, and now some machines. A problem is that we cannot agree what kinds of computation we want to call intelligent. Some people appear to think that human-level intelligence can be achieved by writing large numbers of programs of the kind that people are writing now or by assembling vast knowledge bases of facts in the languages now used for expressing knowledge. However, most AI researchers now appear to believe that new fundamental ideas are required, and therefore it cannot be predicted when human-level intelligence will be achieved (McCarthy, 2008).

12 key AI applications

Machine intelligence does combine a wide variety of advanced technologies to give machines an ability to learn, adapt, make decisions, and display new behaviors. This is achieved using technologies such as neural networks (Sanders et al., 1996), expert systems (Hudson, 1997), self-organizing maps (Burn, 2008), fuzzy logic (Dingle, 2011), and genetic algorithms (Manikas, 2007), and we have applied that machine intelligence technology to many areas. Twelve AI applications include:

- Assembly (Gupta et al., 2001; Schraft and Ledermann, 2003; Guru et al., 2004)

- Building modeling (Gegov, 2004; Wong, 2008)

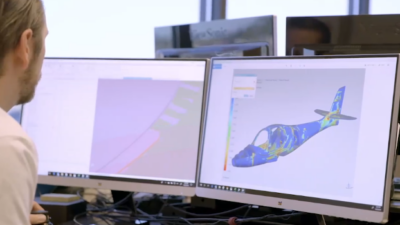

- Computer vision (Bertozzi, 2008; Bouganis, 2007)

- Environmental engineering (Sanders, 2000; Patra, 2008)

- Human-computer interaction (Sanders, 2005; Zhao, 2008)

- Internet use (Bergasa-Suso, 2005; Kress, 2008)

- Medical systems (Pransky, 2001; Cardso, 2007)

- Robotic manipulation (Tegin, 2005; Sreekumar, 2007)

- Robotic programming (Tewkesbury, 1999; Kim, 2008)

- Sensing (Sanders, 2007; Trivedi, 2007)

- Walking robots (Capi et al., 2001; Urwin-Wright, 2003)

- Wheelchair assistance (Stott, 2000; Pei, 2007).

4 ways AI advances computer ability

There appear to be some technologies that could significantly increase the practical ability of computers in these areas (Brackenbury, 2002; Sanders, 2008). Four ways AI helps computing are:

- Natural language understanding to improve communication.

- Machine reasoning to provide inference, theorem-proving, cooperation, and relevant solutions.

- Knowledge representation for perception, path planning, modeling, and problem solving.

- Knowledge acquisition using sensors to learn automatically for navigation and problem solving.

Where are we going with machine intelligence? At one end of the spectrum of research there are handy robotic devices, such as iRobot’s Roomba vacuum cleaners and more personal robots such as the conversation character robots and Zeno robot-boy from Hanson Robotics, and Pleo, from Ugobe. These new “toy” robots could be a beginning for a new generation of ever-present, cheap robots with new capabilities. At another end of the spectrum, direct brain-computer interfaces and biological augmentation of the brain are being considered in research laboratories (along with ultra-high-resolution scans of the brain followed by computer emulation).

Some of these investigations are suggesting the possibility of smarter-than-human intelligence within some specific application areas. However, smarter minds are much harder to describe and discuss than faster brains or bigger brains, and what does “smarter-than-human” actually mean? We may not be smart enough to know (at least not yet).

Could AI turn against us?

Let’s address directly the problem of whether AI is going to destroy us all (Lanier, 2014). In the coming decades, humanity may create a powerful AI, but it has all taken longer than expected…and there has been frustration all along the way. By the middle of the 1960s, research in the U.S. was heavily funded by the Dept. of Defense and laboratories had been established around the world. Researchers were optimistic, and Herbert Simon predicted that “machines will be capable, within 20 years, of doing any work a [hu]man can do,” and Marvin Minsky agreed, writing that “Within a generation…the problem of creating ‘artificial intelligence’ will substantially be solved.” None of that happened, and a lot of the funding dried up.

As recently as December 2014, an open letter calling for caution to ensure intelligent machines do not run beyond our control was signed by a large (and growing) number of people, including some of the leading figures in AI. Fears of our creations turning on us stretch back at least as far as Frankenstein, but as computers begin driving our cars (and powered wheelchairs), we may have to tackle these issues.

The letter from Stephen Hawking notes: “As capabilities in these areas and others cross the threshold from laboratory research to economically valuable technologies, a virtuous cycle takes hold whereby even small improvements in performance are worth large sums of money, prompting greater investments in research. There is now a broad consensus that AI research is progressing steadily, and that its impact on society is likely to increase. The potential benefits are huge, since everything that civilization has to offer is a product of human intelligence; we cannot predict what we might achieve when this intelligence is magnified by the tools AI may provide, but the eradication of disease and poverty are not unfathomable. Because of the great potential of AI, it is important to research how to reap its benefits while avoiding potential pitfalls.”

Other authors have added, “Our AI systems must do what we want them to do,” and they have set out research priorities they believe will help “maximize the societal benefit.” The primary concern is not spooky emergent consciousness, but simply the ability to make high-quality decisions that are aligned with our values (Lanier, 2014), something else that is difficult to pin down.

A system that is optimizing a function of several variables, where the objective depends on only a subset of them, will often set the remaining unconstrained variables to extreme values, for example 0 (Russell, 2014). But if one of those unconstrained variables is actually something we care about, the solution found may be highly undesirable.
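A toy optimization makes the point concrete. In this sketch a power budget is split between a motor and a sensor system, and the objective rewards speed only. The scenario, the function name `best_power_split`, and all numbers are hypothetical, and a brute-force grid search stands in for whatever optimizer a real system might use.

```python
def best_power_split(total_power=100.0, step=5.0, min_sensor_power=0.0):
    """Search every split of a power budget and return the best one found.

    Variables: motor_power and sensor_power (watts, hypothetical).
    Objective: maximize speed, which depends on motor_power only.
    Constraints: motor_power + sensor_power <= total_power,
                 sensor_power >= min_sensor_power.
    Returns (speed, motor_power, sensor_power).
    """
    best = None
    n = int(total_power / step)
    for i in range(n + 1):
        for j in range(n + 1):
            motor_power, sensor_power = i * step, j * step
            if motor_power + sensor_power > total_power:
                continue
            if sensor_power < min_sensor_power:
                continue
            speed = 0.02 * motor_power   # the objective ignores sensor_power
            if best is None or speed > best[0]:
                best = (speed, motor_power, sensor_power)
    return best


print(best_power_split())                       # sensors starved of power
print(best_power_split(min_sensor_power=20.0))  # sensors protected explicitly
```

Nothing in the objective mentions the sensors, so the search drives their power to zero to free up budget for speed. Stating what we care about as an explicit constraint (here `min_sensor_power`) changes the answer, but only if someone remembers to state it.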

Improving decision quality

This is essentially the old story of the genie in the lamp; you get exactly what you ask for, not what you want. As higher-level control systems become more capable decision makers and are connected through the Internet, they could have an unexpected impact.

This is not a minor difficulty. Improving decision quality has been a goal of AI in control engineering. Research has been accelerating as pieces of the conceptual framework fall into place and the building blocks gain in number, size, and strength. Senior AI researchers are noticeably more optimistic than was the case even a few years ago, but there is a correspondingly greater concern about potential risks.

Instead of just creating pure intelligence, we need to be building useful intelligence. AI is a tool, not a threat (Brooks, 2014). Brooks says “relax; chill,” because the fear comes from fundamental misunderstandings of the nature of the progress that is being made, and from a misunderstanding of how far we really are from having artificially intelligent beings. It is a mistake to be worrying about us developing malevolent AI anytime soon, and the worry stems from not distinguishing between the real recent advances in control engineering and the enormous complexity of building sentient AI.

Machine learning allows us to teach things like how to distinguish classes of inputs and to fit curves to time data. This lets our machines know when a powered wheelchair is about to collide with an obstacle. But that is only a very small part of the problem. The learning does not help a machine understand much about the human wheelchair driver or his intent or needs. Any malevolent AI would need these capabilities.
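Fitting a curve to time data can be as simple as a least-squares line through recent range readings, extrapolated to the moment the range reaches zero. The sketch below assumes evenly trustworthy ultrasonic readings and an obstacle closing at roughly constant speed. The function name `time_to_collision` and the sample data are hypothetical.

```python
def time_to_collision(times, ranges):
    """Estimate seconds until an obstacle is reached from recent range samples.

    Fits range = slope * t + intercept by ordinary least squares and
    extrapolates to range == 0. Needs at least two samples taken at
    different times. Returns None if the obstacle is not getting closer.
    """
    n = len(times)
    mean_t = sum(times) / n
    mean_r = sum(ranges) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (r - mean_r) for t, r in zip(times, ranges))
    slope = sxy / sxx                     # closing speed (negative if closing)
    intercept = mean_r - slope * mean_t
    if slope >= 0:
        return None                       # range is steady or increasing
    time_at_zero_range = -intercept / slope
    return time_at_zero_range - times[-1]   # measured from the latest sample


# Hypothetical ultrasonic readings: seconds and metres.
sample_times = [0.0, 0.1, 0.2, 0.3, 0.4]
sample_ranges = [2.0, 1.9, 1.8, 1.7, 1.6]
print(time_to_collision(sample_times, sample_ranges))
```

A controller could compare the returned value with a threshold and slow the motors when the estimate gets short. As the surrounding text notes, this says nothing about what the driver intends.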

The intelligent powered wheelchair systems we are creating are unable to connect their understanding to the bigger world. They don’t know that humans exist (Brooks, 2014). If they are about to run into one, they make no distinction between a human and any other obstacle. The systems don’t even know that the powered wheelchair exists, although people train the systems, and the systems are there to serve us. But they know a little bit about the world, and the controllers have just a little common sense. For instance, they know that if they are driving the motors but are no longer moving for whatever reason, then there is no longer any point in continuing the motion. But they don’t have any semantic connection between an obstacle or person who is detected in their way, and the person who trained them.
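The "little common sense" about driving without moving can be written as a simple rule over recent samples. This is a minimal sketch; the function name `should_stop`, the thresholds, and the window length are assumptions for illustration, not values from the wheelchair systems described.

```python
def should_stop(drive_commands, measured_speeds,
                min_drive=0.2, min_speed=0.02, window=5):
    """Return True when recent samples show drive applied but no motion.

    drive_commands: normalized motor commands, most recent last.
    measured_speeds: measured speeds in m/s, most recent last.
    A stall is declared only if every one of the last `window` samples has
    a meaningful drive command and a negligible measured speed.
    """
    if len(drive_commands) < window or len(measured_speeds) < window:
        return False   # not enough evidence yet
    recent = zip(drive_commands[-window:], measured_speeds[-window:])
    return all(abs(d) >= min_drive and abs(v) < min_speed for d, v in recent)


# Hypothetical log: the chair is commanded forward but has stopped moving.
drive_log = [0.5, 0.5, 0.5, 0.5, 0.5, 0.5]
speed_log = [0.3, 0.01, 0.0, 0.0, 0.01, 0.0]
print(should_stop(drive_log, speed_log))
```

Requiring a full window of agreeing samples is a design choice that avoids reacting to a single noisy reading, at the cost of a short delay before the motors are stopped.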

There is some interesting work in cloud robotics, connecting the semantic knowledge learned by many robots into a common shared representation. This means that anything that is learned by one is quickly shared and becomes useful to all, but that can just make the machine learning problems bigger. It will actually be figuring out the equations and the problems in applications that will allow us to make useful steps forward in control engineering. It is not simply a matter of pouring more computation onto problems. Let’s get on with inventing better and more useful AI. It will take a long time, but there will be rewards for control engineering along the way.

There is a lot of bad stuff going on in the world, but little has to do with AI. There are so many human-directed potential calamities, and we might be wiser to worry about things like terrorism and climate change (Smolin, 2014). If we are to survive as an industrial civilization and solve things like climate change, then there must be a synthesis of the natural and artificial control systems on the planet. To the extent that the feedback systems that control the carbon cycle on the planet have a rudimentary intelligence, this is where the merging of natural and AI could prove decisive for humanity.

More for AI before it is scary

AI may just be a fake thing (Myhrvold, 2014). It may just add an unnecessary philosophical layer to what otherwise should be a technical field. If we talk about particular technical challenges that AI researchers might be interested in, we end up with something duller but that makes a lot more sense. For instance, we can talk about fuzzy logic deciding between classifications in a control system. Can our programs recognize when a wheelchair user wants to go very close to a wall (for example to switch on a light) and that sort of thing? That sort of intelligent problem solving in control engineering is not leading to the creation of any sort of life, and certainly not life that would be superior to us. If you talk about AI as a set of techniques, as a field of study in mathematics or control engineering, it brings benefits. If we talk about it as a mythology, then we can waste time and effort.
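Fuzzy logic deciding between classifications can be sketched with two inputs and three rules. The example below guesses whether a slow approach to a wall looks deliberate. The membership breakpoints, the rule set, the class labels, and the function names are all hypothetical and chosen only to show the mechanics.

```python
def falling(x, full, zero):
    """Membership that is 1 at or below `full` and falls linearly to 0 at `zero`."""
    if x <= full:
        return 1.0
    if x >= zero:
        return 0.0
    return (zero - x) / (zero - full)


def classify_wall_approach(distance_m, speed_mps):
    """Return (label, scores) for a wall approach using three fuzzy rules."""
    near = falling(distance_m, 0.3, 1.0)   # degree to which the wall is near
    slow = falling(speed_mps, 0.1, 0.5)    # degree to which the chair is slow
    fast = 1.0 - slow

    scores = {
        # Rule 1: near AND slow -> the user probably wants to be close.
        "deliberate": min(near, slow),
        # Rule 2: near AND fast -> likely collision, so intervene.
        "collision": min(near, fast),
        # Rule 3: NOT near -> nothing to decide yet.
        "clear": 1.0 - near,
    }
    label = max(scores, key=scores.get)    # pick the strongest rule
    return label, scores


print(classify_wall_approach(0.2, 0.05))
print(classify_wall_approach(0.2, 0.6))
print(classify_wall_approach(2.0, 0.3))
```

Using `min` for AND and `1 - x` for NOT is one common choice among several. The graded scores, rather than the hard label, are what a controller might use to blend between letting the user proceed and slowing the chair.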

It would be exciting if AI was working so well that it was about to get scary (Wastler, 2014), but the part that causes real problems to human beings and the world is the actuator as it is the interface to changing physicality. It is our control systems that will control it. Thinking about the problem as some sort of rogue autonomy algorithm, instead of thinking about the actuators, is misdirected. It is the actuators controlled by the control systems that can do real harm. Some AI mythology appears to be similar to some traditional ideas about religion, but applied to the technical world. The notion of a threshold (sometimes called a singularity or super-intelligence, etc.) is similar to a certain kind of superstitious idea about divinity (Lanier, 2014).

That said, as control engineers we may need to be more concerned about the two- or three-year-old child version of AI. Two- and three-year-olds don’t understand when they are being destructive, but it will be difficult if their mistakes lead to more regulation. If our wheelchairs drive under a bus in an effort to avoid hitting a cat, then there will be interesting questions to answer, but regulation just sends work off-shore and hampers the “trusted” players, while hackers continue anyway.

Smarter, with benefits

Machines and control algorithms are getting smarter, and we are working hard to achieve that so that we can enjoy the real benefits, but what should these smart machines do and what should they not do? Should they decide who to kill on the battlefield or in the medical field? The Association for the Advancement of Artificial Intelligence has formally addressed these ethical issues in detail, with a series of panels, and plans are underway to expand the effort (Muehlhauser, 2014).

There are negative opinions, though. The philosopher John Searle says that the idea of a nonbiological machine being intelligent is incoherent; Hubert Dreyfus says that it is impossible. The computer scientist Joseph Weizenbaum says the idea is obscene, anti-human, and immoral. Various people have said that since AI hasn’t reached a human level by now, it may never reach it. Still other people are disappointed that companies they invested in went bankrupt (Basic Questions, 2015).

7 AI-boosting breakthroughs

Seven recent breakthroughs may boost AI developments:

- Cheap parallel computation could provide the equivalent of billions of neurons firing simultaneously.

- Big Data can help with categorization by drawing on the enormous amount of data that is stored on servers.

- Better algorithms, such as neural nets in stacked layers with optimized results within each layer, will allow learning to be accumulated faster.

- The increasing availability of vast computing power at low cost, and the advances in computer science and engineering, are influencing developments in control engineering.

- Recursive algorithmic solutions of control problems are now possible, as opposed to the search for closed-form solutions (Kucera, 1997).

- Control systems are decision-making systems, and that is leading to interdisciplinary research and cross-fertilization. Emerging control areas include hybrid control systems (systems with continuous dynamics controlled by sequential machines), fuzzy logic control, parallel processing, neural networks, and learning. Control systems theory also benefits signal processing, communications, numerical analysis, transport, and economics.

- Analog computing is making a comeback, especially in control engineering. The idea that a machine can ultimately think as well as or better than a human is a welcome one (Myhrvold, 2014), but our brain is an analog device, and if we are going to worry about AI, we may need analog computers and not digital ones. That said, a model is never reality, and if our models someday outdo the phenomena they’re modeling, we would have a one-off novelty.
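The item in the list above on recursive algorithmic solutions can be illustrated with a small sketch. It is not taken from the article: it assumes a linear-quadratic regulator for a scalar plant x[k+1] = a*x[k] + b*u[k], with state weight q and input weight r, and the function name riccati_gain is hypothetical. Instead of solving for the cost weight in closed form, the code repeats the Riccati recursion until the value stops changing.

```python
def riccati_gain(a, b, q, r, tol=1e-12, max_iter=10_000):
    """Iterate the scalar discrete-time Riccati recursion to a fixed point.

    Assumed plant: x[k+1] = a*x[k] + b*u[k]
    Assumed cost:  sum of q*x[k]**2 + r*u[k]**2
    Returns (p, k), where u = -k*x is the resulting state-feedback law.
    """
    p = q  # initial guess for the cost-to-go weight
    for _ in range(max_iter):
        p_next = q + a * a * p - (a * b * p) ** 2 / (r + b * b * p)
        converged = abs(p_next - p) < tol
        p = p_next
        if converged:
            break
    k = a * b * p / (r + b * b * p)  # feedback gain from the converged weight
    return p, k


if __name__ == "__main__":
    # Illustrative numbers only: an unstable plant (a > 1) with unit weights.
    p, k = riccati_gain(a=1.2, b=1.0, q=1.0, r=1.0)
    print("cost weight:", p)
    print("gain:", k)
    print("closed-loop pole:", 1.2 - 1.0 * k)
```

A scalar problem like this one can still be solved by hand; the same recursion is useful mainly for matrix-valued problems, where a closed-form answer is rarely available and cheap computing makes iteration practical.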

The main thing that people appear to be afraid of is that AI may take over decisions that they think should be made by human beings, for example, driving cars or powered wheelchairs and aiming and firing missiles. These can be life-and-death decisions, and they are engineering as well as ethical problems.

If an AI system makes a decision that we regret, then we change its algorithms. If AI systems make decisions that our society or our laws do not approve of, then we will modify the principles that govern them or create better ones. Of course, human engineers make mistakes and intelligent machines will make mistakes too, even big mistakes. As with humans, we need to keep monitoring, teaching, and correcting them. There will be a lot of scrutiny on the actions of artificial systems, but a wider problem is that we do not have a consensus on what is appropriate.

Right tools for the right task

There is a distinction to be made between machine intelligence and machine decision making. We should not be afraid of intelligent machines but of machines making decisions that they do not have the intelligence to make. As with human beings, it is stupidity in machines that is dangerous, not intelligence (Bishop, 2014). A problem is that intelligent control algorithms can make a great many good decisions and then one day make a very foolish decision and fail spectacularly because of some event that never appeared in the training data. That is the problem with bounded intelligence. We should fear our own stupidity far more than the hypothetical brilliance or stupidity of algorithms yet to be invented. AI machines have no emotions and never will, because they are not subject to the forces of natural selection (Ingham & Mollard, 2014).
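One hedged way to picture the bounded-intelligence problem in code is a guard that checks whether an input lies inside the range seen during training and, if it does not, hands control to a fixed safe setpoint instead of trusting the learned policy. This is only a sketch under stated assumptions: the names EnvelopeGuard and guarded_setpoint, the per-feature min/max test, and the sample numbers are all hypothetical and are not from the article.

```python
class EnvelopeGuard:
    """Remembers the per-feature range of the training inputs (an assumption:
    a simple min/max box is a good enough description of 'seen before')."""

    def __init__(self, training_inputs, margin=0.0):
        columns = list(zip(*training_inputs))  # one tuple per feature
        self.low = [min(c) - margin for c in columns]
        self.high = [max(c) + margin for c in columns]

    def inside(self, x):
        """True if every feature of x lies within the remembered range."""
        return all(lo <= v <= hi for v, lo, hi in zip(x, self.low, self.high))


def guarded_setpoint(policy, guard, x, safe_setpoint=0.0):
    """Use the learned policy only for inputs the guard recognizes."""
    return policy(x) if guard.inside(x) else safe_setpoint


if __name__ == "__main__":
    # Hypothetical training data: (obstacle distance in m, speed in m/s).
    training = [(0.5, 0.1), (2.0, 0.8), (1.2, 0.4)]
    guard = EnvelopeGuard(training)
    policy = lambda x: min(1.0, x[0] / 2)  # stand-in for a learned mapping
    print(guarded_setpoint(policy, guard, (1.0, 0.5)))  # familiar input
    print(guarded_setpoint(policy, guard, (5.0, 0.5)))  # outside the training range
```

The design choice here is deliberately conservative: the outer loop gives up authority when it meets something unfamiliar, which keeps the decision with a predictable inner loop or a human operator.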

There is no metric for intelligence or benchmark for particular kinds of learning and smartness, and so it is difficult to know if we are improving (Kelly, 2014). We definitely do not have any ruler to measure the continuum of intelligence. We don’t even have an agreed definition of intelligence. AI does appear to be becoming more useful in control engineering though, partly through trial and error and through removing control algorithms and machines that do not work. As AI systems make mistakes, we can decide what is acceptable. Since AI systems are assuming some tasks that humans do, we have much to teach them. Without this teaching and guidance they would be frightening (as many engineering systems can be), but as control engineers, we can provide that teaching and guidance.

Virtual world

As humans, we know the physical world only through a neurologically generated virtual model that we consider to be reality. Even our life history and memory are neurological constructs. Our brains generate the narratives that we live by. These narratives are imprecise, but good enough for us to blunder along. Although we may be bested on specific tasks, overall, we tend to fare well in competition against machines. Machines are very far from simulating our flexibility, cunning, deception, anger, fear, revenge, aggression, and teamwork (Brockman, 2014).

While respecting the narrow, chess-playing prowess of Deep Blue, we should not be intimidated by it. In fact, intelligent machines have helped human beings to become better chess players. As AI develops, we might have to engineer ways to prevent consciousness in them just as we engineer control systems to be safe. After all, even with Watson or Deep Blue, anyone can pull its plug and beat it into rubble with a hammer (Provine, 2014)…or can we?

– David A. Sanders (Engineering) and Alexander Gegov (Computer Science), University of Portsmouth, UK; Edited by Mark T. Hoske, content manager, Control Engineering.

ONLINE

Consider this

More frequent control software upgrades may become more advantageous as programs incorporate more useful AI principles.

ONLINE extras

This 15-page (approximate paper equivalent) online article is summarized in the Control Engineering February 2015 issue.

See below for related links and references, including a December 2013 article by Sanders, “Artificial intelligence tools can aid sensor systems.”

Association for the Advancement of Artificial Intelligence

Artificial intelligence tools can aid sensor systems

At least seven artificial intelligence (AI) tools can be useful when applied to sensor systems: knowledge-based systems, fuzzy logic, automatic knowledge acquisition, neural networks, genetic algorithms, case-based reasoning, and ambient-intelligence. See diagrams.

Artificial intelligence: Fuzzy logic explained

Fuzzy logic for most of us: It’s not as fuzzy as you might think and has been working quietly behind the scenes for years. Fuzzy logic is a rule-based system that can rely on the practical experience of an operator, particularly useful to capture experienced operator knowledge. Here’s what you need to know.

References

Bar-Cohen Y (2003). Actuation of biologically inspired intelligent robotics using artificial muscles. Industrial Robot: An International Journal. Vol. 30 (4), pp 331-337.

Basic Questions (2015). Basic Questions-What Is Artificial intelligence? Stanford University. https://www-formal.stanford.edu/jmc/whatisai/node1.html. Accessed 15 January 2015.

Bellman R (1952). On the Theory of Dynamic Programming. Proceedings of the National Academy of Sciences.

Bellman RE (1957). Dynamic Programming. Princeton University Press, Princeton, NJ, USA. Republished 2003: Dover, ISBN 0-486-42809-5.

Bergasa-Suso J, Sanders DA, Tewkesbury GE (2005). Intelligent browser-based systems to assist Internet users. IEEE Transactions 48 (4), pp 580-585.

Berlinski D (2000). The Advent of the Algorithm. Harcourt Books. ISBN 0-15-601391-6.

Bertozzi M, Bombini L, Broggi A, Cerri P, Grisleri P, Medici P, Zani P (2008). GOLD: A framework for developing intelligent-vehicle vision applications. IEEE Intelligent Systems. Vol. 23 (1), pp 69-71.

Bishop M (2014). Fear artificial stupidity, not artificial intelligence. New Scientist. https://www.newscientist.com/article/dn26716-fear-artificial-stupidity-not-artificial-intelligence.html#.VLlxA6P4vK0. Accessed 15 January 2015.

Bouganis A, and Shanahan M (2007). A vision-based intelligent system for packing 2-D irregular shapes. IEEE Trans on Automation Science & Engineering. Vol. 4 (3), pp 382-394.

Brackenbury I, Ravin Y (2002). Machine intelligence and the Turing Test. IBM Systems Journal. Vol. 41 (3), pp 524-529.

Brockman J (2014). The Myth of AI-A Conversation with Jaron Lanier. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Brooks R (2014). Artificial intelligence is a tool, not a threat (https://www.rethinkrobotics.com/artificial-intelligence-tool-threat) in rethinking robotics https://www.rethinkrobotics.com/category/rethinking-robotics accessed 14 January 2015.

Cannon W (1932). Wisdom of the Body. United States: W.W. Norton & Company. ISBN 0393002055.

Cannon WB (1929). Bodily changes in pain, hunger, fear, and rage. New York: Appleton-Century-Crofts.

Capi G, Nasu Y, Barolli L, Mitobe K, Yamano M (2001). Real time generation of humanoid robot optimal gait for going upstairs using intelligent algorithms. Industrial Robot: An International Journal. Vol. 28 Issue 6; pp 489-497.

Cardoso JS and Cardoso MJ (2007). Towards an intelligent medical system for the aesthetic evaluation of breast cancer conservative treatment. Artificial Intelligence in Medicine. Vol. 40 (2), pp 115-126.

Hickman CP Jr, Roberts LS, Keen SL, Larson A, I’Anson H, Eisenhour DJ (2008). Integrated Principles of Zoology: Fourteenth Edition. New York, NY, USA: McGraw-Hill Higher Education. p. 733. ISBN 978-0-07-297004-3.

Crevier D (1993). AI: The Tumultuous Search for Artificial Intelligence. New York, NY, USA: BasicBooks. ISBN 0-465-02997-3.

Dingle N (2011). Artificial intelligence: Fuzzy logic explained. Control Engineering. https://www.controleng.com/single-article/artificial-intelligence-fuzzy-logic-explained/8f3478c13384a2771ddb7e93a2b6243d.html. Accessed 15 January 2015.

Dreyfus HL and Dreyfus SE (2008). From Socrates to Expert Systems: The Limits and Dangers of Calculative Rationality. WWW Pages of the Graduate School at Berkeley (accessed 15 January 2015). https://garnet.berkeley.edu/~hdreyfus/html/paper_socrates.html

Dyson G (2014). AI Brains Will Be Analog Computers, of Course. Space Hippo. https://space-hippo.net/ai-brains-analog-computers. Accessed 15 January 2015.

Feigenbaum E (1990). Knowledge Processing-From File Servers to Knowledge Servers. The Age of Intelligent Machines, MIT Press.

Gegov A (2004). Application of computational intelligence methods for intelligent modelling of buildings. Applications and science in soft computing. ISSN: 1615-3871 (Advances in soft computing ISBN: 3-540-40856-8 Editors: Lotfi A, Garibaldi J), Springer-Verlag, Berlin, pp 263-270.

Gupta SK, Paredis CJJ, Sinha R, Brown PF (2001). Intelligent assembly modelling and simulation, Assembly Automation. ISSN: 0144-5154, Vol. 21 Issue 3, pp 215-235.

Guru SM, Fernando S, Halgamuge S, and Chan K (2004). Intelligent fastening with A-BOLT technology and sensor networks. Assembly Automation. ISSN: 0144-5154. Vol. 24 (4), pp 386-393.

Hudson AD, Sanders DA, Golding H, et al. (1997). Aspects of an expert design system for the wastewater treatment industry. Journal of Systems Architecture 43 (1-5): 59-65.

Hurwitz A (1895). “Ueber die Bedingungen, unter welchen eine Gleichung nur Wurzeln mit negativen reellen Teilen besitzt.” Mathematische Annalen Nr. 46, Leipzig: 273-284.

Ingham R and Mollard P (2014). Artificial intelligence: Hawking’s fears stir debate. https://phys.org/news/2014-12-artificial-intelligence-hawking-debate.html. Accessed 15 January 2015.

Jansen A, Nguyen X, Karpitsky V, Mettenleiter M (27 October 1995). “Central Command Neurons of the Sympathetic Nervous System: Basis of the Fight-or-Flight Response.” Science. Vol. 270 (5236).

Kandel ER (2012). Principles of neural science (ed: Kandel ER, Schwartz JH and Jessell TM). Appleton and Lange: McGraw Hill, pp 338-343. ISBN 978-0-07-139011-8.

Kelly K (2014). Untitled. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Kim J, Choi M, Kim M, Kim S, Kim M, Park S, Lee J, Kim B (2008). Intelligent robot software architecture. 13th International Conference on Advanced Robotics. Published in lecture notes in control and information sciences, Vol. 370, pp 385-397.

Kress M (2008). Intelligent Internet knowledge networks: Processing of concepts and wisdom. Information processing & management. Vol. 44 (2), pp 983-984.

Kucera V (1997). Control Theory and Forty Years of IFAC: A Personal View. IFAC Newsletter Special Issue: 40th Anniversary of IFAC, Paper 5. https://web.dit.upm.es/~jpuente/ifac/newsletter40a/kucera.html. Accessed 15 January 2015.

Kurzweil R (2005). The Singularity is Near. Penguin Books. ISBN 0-670-03384-7.

Lanier J (2014). The Myth Of AI-A Conversation with Jaron Lanier. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Legg S, Hutter M (2007). Universal intelligence: A definition of machine intelligence. Minds and Machines. Vol. 17, pp 391-444.

Lighthill J (1973). “Artificial Intelligence: A General Survey.” Artificial Intelligence: A Paper Symposium. Science Research Council.

Masi CG (2007). Fuzzy Neural Control Systems-Explained. Control Engineering. https://www.controleng.com/single-article/fuzzy-neural-control-systems-explained/5c059c54afe9fbc0b7428ac58af007b6.html. Accessed 15 January 2015.

Maxwell JC (1868). “On Governors”: Exposition on Feedback Mechanisms. From the Proceedings of the Royal Society, No. 100.

McCarthy J (2008). “What Is Artificial Intelligence?” Computer Science Department WWW Pages at Stanford University (retrieved 19 June 2008). https://www-formal.stanford.edu/jmc/whatisai/whatisai.html. Retrieved 14 January 2015.

McCorduck P (2004). Machines Who Think: A Personal Inquiry into the History and Prospects of Artificial Intelligence. New York, AK Peters. ISBN-10: 1568812051

Minorsky N (1922). “Directional stability of automatically steered bodies.” Journal of the American Society of Naval Engineers. 34: 280-309. doi:10.1111/j.1559-3584.1922.tb04958.x

Muehlhauser M (2014). Three misconceptions in Edge.org’s conversation on “The Myth of AI.” Machine Intelligence Research Institute. https://intelligence.org/2014/11/18/misconceptions-edge-orgs-conversation-myth-ai. Accessed 15 January 2015.

Myhrvold N (2014). Untitled. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Patra JC, Juhola M, Meher PK (2008). Intelligent sensors using computationally efficient Chebyshev neural networks. IET Science measurement & technology. Vol. 2 (2), pp 68-75.

Pei J, Huosheng H, Tao L and Kui Y (2007). Head gesture recognition for hands-free control of an intelligent wheelchair. Industrial Robot: An International Journal. Vol. 34 (1), pp 60-68.

Pocock G (2006). Human Physiology (3rd ed.). Oxford University Press. pp 63-64. ISBN 978-0-19-856878-0.

Pontryagin LS, Boltyanskii VG, Gamkrelidze RV, Mishchenko EF (1962). The Mathematical Theory of Optimal Processes. English translation. Interscience. ISBN 2-88124-077-1.

Pransky J (2001). An intelligent operating room of the future—An interview with the University of California Los Angeles Medical Center. Industrial Robot: An International Journal. Vol. 28 Issue 5, pp 376-382.

Provine R (2014). Untitled. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Routh EJ (1874). Treatise on the Stability of a Given State of Motion, Particularly, Steady Motion, MacMillan, London, included within Routh, EJ (1877). Treatise on the Stability of a Given State of Motion. MacMillan. Reprinted in ‘Stability of Motion’ (ed. AT Fuller) London 1975 (Taylor & Francis).

Russell S (2014). Of Myths and Moonshine. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Russell S, Norvig P (2003). Artificial Intelligence: A Modern Approach. Pearson Education. ISBN: 0137903952.

Sanders D (1999). Perception in robotics. Industrial Robot: An International Journal. Vol. 26 (2), pp 90-91.

Sanders D (2007). Force sensing. Industrial Robot: An International Journal. Vol. 34 (3), p 177. ISSN 0143-991X 10.1108/01439910738791.

Sanders D (2008). Progress in machine intelligence. Industrial Robot: An International Journal. Vol. 35 (6), pp 485-487. ISSN 0143-991X.

Sanders D (2013). Artificial intelligence tools can aid sensor systems. Control Engineering, Dec 13, pp 44-48. ISSN 0010-8049.

Sanders DA and Hudson AD (2000). A specific blackboard expert system to simulate and automate the design of high recirculation airlift reactors. Mathematics & Computers in simulation 53 (1-2), pp 41-65.

Sanders DA, Urwin-Wright SD, Tewkesbury GE, et al. (2005). Pointer device for thin-film transistor and cathode ray tube computer screens. Electronics Letters 41 (16), pp 894-896.

Sanders DA, Haynes BP, Tewkesbury GE, et al. (1996). The addition of neural networks to the inner feedback path in order to improve on the use of pre-trained feed forward estimators. Mathematics and computers in simulation 41 (5-6), pp 461-472.

Schmidt A and Thews G (1989). “Autonomic Nervous System.” In Janig, W. Human Physiology (2 ed.). New York, NY: Springer-Verlag. pp 333-370.

Schraft RD and Ledermann T (2003). Intelligent picking of chaotically stored objects. Assembly Automation, ISSN: 0144-5154. Vol. 23 (1), pp 38-42.

Searle JR (1990). Consciousness, Explanatory Inversion, and Cognitive Science. The Behavioral and Brain Sciences 13 (4), (December), pp 585-696.

Skillings J (2006). “Getting Machines to Think Like Us.” https://news.i.com.com. Retrieved 10 March 2012.

Smolin L (2014). Untitled. Edge. https://edge.org/conversation/the-myth-of-ai. Accessed 15 January 2015.

Sreekumar M, Nagarajan T, Singaperumal M, Zoppi M, and Molfino R (2007). Critical review of current trends in shape memory alloy actuators for intelligent robots. Industrial Robot: An International Journal. Vol. 34 (4), pp 285-294.

Stott I, Sanders D (2000). New powered wheelchair systems for the rehabilitation of some severely disabled users. International Journal of Rehabilitation Research. Vol. 23 (3), pp 149-153.

Tegin J and Wikander J (2005). Tactile sensing in intelligent robotic manipulation-A review. Industrial Robot: An International Journal. Vol. 32 (1), pp 64-70.

Tewkesbury G, Sanders D (1999). A new robot command library which includes simulation. Industrial Robot: An International Journal. Vol. 26 (1), pp 39-48.

Trivedi MM, Cheng Y (2007). Holistic sensing and active displays for intelligent driver support systems. Computer. Vol. 40 (5), pp 60-62.

Turing A (1950). Computing machinery and intelligence. Mind, 1950.

Urwin-Wright S, Sanders D, Chen S (2003). Predicting terrain contours using a feed-forward neural network. Engineering Applications of Artificial Intelligence. Vol. 16 (5-6), pp 465-472.

Wang Y (2007). Keynote address. Proc. 6th IEEE International Conference on Cognitive Informatics, CA, pp 3-12.

Wastler A (2014). Elon Musk, Stephen Hawking and fearing the machine. CNBC technology. https://www.cnbc.com/id/101774267#. Accessed 15 January 2015.

Wong J, Li H, Lai J (2008). Evaluating the system intelligence of the intelligent building systems. Automation in Construction. Vol. 17 (3), pp 303-321.

Zadeh LA (1950). Thinking machines: A new field in electrical engineering. Columbia Engineering Quarterly 3: pp 12-13 and pp 30-31.

Zhao M, Nowatzyk AG, Lu T, Farkas DL (2008). Intelligent non-contact surgeon-computer interface using hand gesture recognition. Three dimensional image capture and applications 2008, Proc of SPIE-IS&T Electronic Imaging. Vol. 6805, pp U8050-U8050.