Cover Story: Can artificial intelligence (AI) prove to be the next evolution of control systems? See three AI controller characteristics and three applications.

Learning Objectives

- Characteristics of AI-based controllers include learning, delayed gratification and non-traditional input data.

- The AI controller needs its brain trained.

- AI controller use cases include energy optimization, quality control and chemical processing.

Control systems have continuously evolved over decades, and artificial intelligence (AI) technologies are helping advance the next generation of some control systems.

The proportional-integral-derivative (PID) controller can be interpreted as a layering of capabilities: the proportional term points toward the signal, the integral term homes in on the setpoint and the derivative term can minimize overshoot.
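
The layering of the three PID terms can be made concrete with a short sketch. It is a minimal textbook discrete PID, not taken from any product discussed here; the gains, the time step and the first-order process it drives are assumptions chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook discrete PID controller (illustrative gains, not tuned for a real loop)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement
        self.integral += error * dt                  # integral term removes steady-state offset
        derivative = (error - self.prev_error) / dt  # derivative term damps fast changes
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(steps: int = 400, dt: float = 0.1) -> float:
    """Drive a hypothetical first-order process, dy/dt = -y + u, toward a setpoint of 1.0."""
    pid = PID(kp=2.0, ki=1.0, kd=0.1)
    y = 0.0
    for _ in range(steps):
        u = pid.update(1.0, y, dt)
        y += dt * (-y + u)  # explicit Euler step of the assumed process
    return y


final_value = simulate()
```

With these assumed numbers the process value settles on the setpoint; setting `ki` to zero would leave a steady offset, which is the gap the integral term closes.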

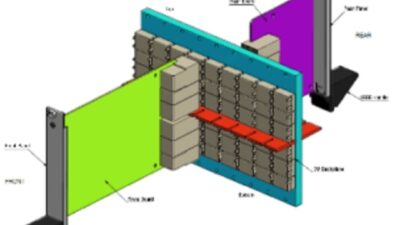

Although the controls ecosystem may present a complex web of interrelated technologies, it can also be simplified by viewing it as ever-evolving branches of a family tree. Each control system technology offers its own characteristics not available in prior technologies. For example, feed forward improves PID control by predicting controller output, and then uses the predictions to separate disturbance errors from noise occurrences. Model predictive control (MPC) adds further capabilities to this by layering predictions of future control action results and controlling multiple correlated inputs and outputs. The latest evolution of control strategies is the adoption of AI technologies to develop industrial controls. One of the latest advancements in this area is the application of reinforcement learning-based controls, as Figure 1 shows.
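
As a rough illustration of what feedforward adds on top of feedback, the sketch below compares a proportional loop with and without a feedforward term that cancels a measured disturbance. The process model, gains, bias and disturbance size are assumptions, and the feedforward here is idealized (it knows the disturbance exactly), which a real loop rarely does.

```python
def peak_error(use_feedforward: bool, steps: int = 300, dt: float = 0.05) -> float:
    """Largest deviation from setpoint after a step disturbance hits an assumed process.

    Assumed process: dy/dt = -y + u + d, where d is a measured disturbance.
    """
    kp, bias, setpoint = 2.0, 1.0, 1.0
    y = 1.0          # start at the setpoint
    worst = 0.0
    for k in range(steps):
        d = 0.5 if k >= 100 else 0.0       # disturbance steps in partway through
        error = setpoint - y
        u = bias + kp * error              # feedback part
        if use_feedforward:
            u -= d                         # feedforward part: cancel the measured disturbance
        y += dt * (-y + u + d)
        worst = max(worst, abs(setpoint - y))
    return worst


without_ff = peak_error(use_feedforward=False)
with_ff = peak_error(use_feedforward=True)
```

In this idealized case the feedforward loop never leaves the setpoint, while the feedback-only loop has to see an error before it can react.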

Three characteristics of AI-based controllers

AI-based controllers (that is, deep reinforcement learning-based, or DRL-based, controllers) offer unique and appealing characteristics, such as:

- Learning: DRL-based controllers learn by methodically and continuously practicing, an approach known as machine teaching. Hence, these controllers can discover nuances and exceptions that are not easily captured in expert systems and that may be difficult to control with fixed-gain controllers. The DRL engine can be exposed to a wide variety of process states by the simulator. Many of these states would never be encountered in the real world, as the AI engine (brain) may try to operate the plant much too close to, or beyond, the operational limits of a physical facility. In this case, these excursions (which would likely cause a process trip) are experiences from which the brain learns what behaviors to avoid. When this is done often enough, the brain learns what not to do. In addition, the DRL engine can learn from many simulations at once. Instead of feeding the brain data from one plant, it can learn from hundreds of simulations, each proceeding faster than real time, to provide the training experience conducive to optimal learning.

- Delayed gratification: DRL-based controllers can learn to accept sub-optimal behavior in the short term in exchange for larger gains in the long term. Humans know this behavior as “delayed gratification,” an idea discussed by Sigmund Freud and, as far back as the fourth century B.C., by Aristotle. When AI behaves this way, it can push past tricky local minima to more optimal solutions.

- Non-traditional input data: DRL-based controllers can take in and evaluate sensor information that traditional automated systems cannot. For example, an AI-based controller can consider visual information about product quality or equipment status. It can also take categorical machine alerts and warnings into consideration when taking control actions. AI-based controllers can even use sound signals and vibration sensor inputs to make process decisions, much as human operators rely on the sounds around them. The ability to process visual information, such as the size of a flare, differentiates DRL-based controllers and shows what they can do.
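
The delayed-gratification characteristic above is usually expressed in reinforcement learning through a discounted sum of future rewards. The sketch below is a minimal illustration with made-up reward sequences; the discount factor `gamma` and the two example trajectories are assumptions, not values from any real controller.

```python
def discounted_return(rewards, gamma: float = 0.95) -> float:
    """Sum of rewards, each discounted by gamma for every step it lies in the future."""
    total = 0.0
    for t, reward in enumerate(rewards):
        total += (gamma ** t) * reward
    return total


# Hypothetical trajectories: one grabs a small reward now,
# the other pays a cost up front and collects a larger reward later.
greedy = [1.0, 0.0, 0.0, 0.0, 0.0]
patient = [-1.0, 0.0, 0.0, 0.0, 5.0]

greedy_value = discounted_return(greedy)
patient_value = discounted_return(patient)
```

An agent that maximizes this quantity prefers the patient trajectory even though its first step looks worse, which is the behavior that lets it move past a local minimum.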

Enabling DRL-based control systems

Four steps are involved in delivering DRL-based controls to a process facility:

- Preparation of a companion simulation model for the brain

- Design and training of the brain

- Assessment of the trained brain

- Deployment.

Companion simulation model

Enabling DRL-based controllers requires a simulation or “digital twin” environment in which the brain can practice and learn how decisions are made. The advantage of this method is the brain can learn both what is considered “good” and what is “bad” for the system, to achieve stated goals. Given that the real environment has far more variability than is usually represented within a process simulation model, and given the amount of simulation required to train the brain over the state space of operation, reduced-order models that maintain fundamental principles of physics offer the best method of training the brain. These models offer a way to develop complex process simulations and run faster, both of which allow a more efficient way to develop the brain. Tag-based process simulators are known for their simple design, ease of use and ability to adapt to a wide range of simulation needs, which fits the requirements of a simulation model for training DRL-based brains.

In this modern age, when panels of lights and switches have been relegated to the back corner of the staging floor, tag-based simulators have become much more significant in making the job of an automation engineer less cumbersome. Using simulation to test a system on a factory acceptance test (FAT) prior to going to the field has been the “bread and butter” of process simulation software for decades – well before the advent of modern lingo, like “digital twin.” The same simulators can be used in training AI engines to effectively control industrial processes. To achieve this, simulators need to be able to run in a distributed fashion across multiple CPUs and potentially in the “cloud.” Multiple instances of the simulations are needed to either exercise, train or assess potential new AI algorithms in parallel execution. Once this is achieved, operator trainer systems that have been developed using tag-based simulators can be used for training DRL-based AI engines.
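
To make the idea of many simulator instances running side by side more concrete, here is a minimal sketch. The reduced-order tank model (a simple mass balance), the tag names and the random valve moves standing in for the learning agent are all hypothetical. A thread pool is used only to keep the example self-contained; a real training setup would more likely use separate processes or containers.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def run_episode(seed: int, steps: int = 200, dt: float = 1.0) -> dict:
    """One instance of a hypothetical reduced-order tank model, keyed by tag name."""
    rng = random.Random(seed)
    tags = {"TANK.LEVEL": rng.uniform(0.2, 0.8), "VALVE.IN": 0.0, "VALVE.OUT": 0.5}
    area = 10.0  # assumed tank cross-section, arbitrary units
    for _ in range(steps):
        tags["VALVE.IN"] = rng.random()  # random exploration stands in for the agent
        net_flow = tags["VALVE.IN"] - tags["VALVE.OUT"]
        level = tags["TANK.LEVEL"] + dt * net_flow / area  # mass balance
        tags["TANK.LEVEL"] = min(max(level, 0.0), 1.0)     # tank cannot go below empty or above full
    return tags


def run_many(n_instances: int = 8) -> list:
    """Run several independent simulator instances concurrently."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        return list(pool.map(run_episode, range(n_instances)))


results = run_many(8)
```

Each instance gets its own seed, so the episodes explore different parts of the state space while remaining reproducible.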

Design and training of the brain

Designing the brain around the process to be controlled is crucial in developing a successful DRL-based, optimal control solution. The brain can include not only AI concepts but also heuristics, programmed logic and well-known rules. Once information has been properly gleaned from a subject matter expert (SME), the ability to implement a brain using that information is key to the success of a project.

Figure 3: Wood VP Link for Microsoft Bonsai software helps users define state and action spaces with a tag-based simulator. Courtesy: Wood, Microsoft

The decisions about the size of the process state and action space boil down to which simulation tags should be included in each of the state and action structures. Figure 3 presents an example of a tag-based simulator where states and actions are defined. Selecting the tags from a list and clicking a button can add them to the state or action structure used by the brain.
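
The tag-selection step can be pictured as building two lists of tag names, one read by the brain and one written by it. The sketch below is not the VP Link or Bonsai interface; the `BrainInterface` class and the tag names are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class BrainInterface:
    """Holds the tag names exposed to the brain as state and as action."""
    state_tags: list = field(default_factory=list)
    action_tags: list = field(default_factory=list)

    def observe(self, sim_tags: dict) -> dict:
        """Read only the selected state tags from the simulator."""
        return {tag: sim_tags[tag] for tag in self.state_tags}

    def apply(self, sim_tags: dict, action: dict) -> None:
        """Write only the selected action tags back to the simulator."""
        for tag in self.action_tags:
            sim_tags[tag] = action[tag]


# Hypothetical tag list for a simple tank; names are illustrative only.
sim_tags = {"TANK.LEVEL": 0.4, "TANK.SETPOINT": 0.6, "VALVE.IN": 0.0, "PUMP.AMPS": 3.2}

interface = BrainInterface()
interface.state_tags += ["TANK.LEVEL", "TANK.SETPOINT"]  # "add to state"
interface.action_tags += ["VALVE.IN"]                    # "add to action"

state = interface.observe(sim_tags)
interface.apply(sim_tags, {"VALVE.IN": 0.75})
```

Tags left out of both lists, such as the pump current here, stay in the simulator but are invisible to the brain, which is how the size of the state and action spaces is kept in check.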

Defining state and action spaces

Inkling is a language developed for use in training DRL agents to express the training paradigm in a compact, expressive and easy-to-understand syntax. Tag-based simulators can be programmed to automatically generate the Inkling code defining the state and action structures for the brain (shown in Figure 4).

Figure 5: This is user-created code needed to create an artificial intelligence (AI) brain. Courtesy: Wood, Microsoft

Choosing appropriate lessons and scenarios matched to a goal, such as reaching a level setpoint without overflowing the tank, is the result of proper collaboration between the brain designer and the SME. The “lesson” and “scenario” statements tell the brain how to learn that goal. In this case, the scenario directs the brain to start each training episode with a random, yet constrained level and setpoint.
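
A scenario of this kind amounts to drawing starting conditions at random from bounded ranges. The Python sketch below mimics that idea only; it is not Inkling, and the function name and the numeric ranges are assumptions.

```python
import random


def sample_scenario(rng: random.Random,
                    level_range=(0.1, 0.9),
                    setpoint_range=(0.2, 0.8)) -> dict:
    """Draw a random but bounded starting level and setpoint for one episode."""
    return {
        "initial_level": rng.uniform(*level_range),
        "setpoint": rng.uniform(*setpoint_range),
    }


rng = random.Random(42)  # fixed seed so the training starts are reproducible
episodes = [sample_scenario(rng) for _ in range(1000)]
```

Widening the ranges exposes the brain to more of the state space at the cost of longer training, which is the kind of trade-off the brain designer and the SME would settle together.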

Creating code to create an AI brain

Effective training of the brain requires a very large state space of operation to be explored. Cloud technologies allow simulators to be containerized and run in a massively parallel environment. However, for successful results, ideas for training the brain need to be run through the simulator locally first to “iron out” the bugs. Once the user is satisfied, the simulator can be containerized and run in the cloud. Typical brain training sessions run anywhere between 300,000 and 1,000,000 training iterations. Figure 6 shows the training progress of a brain with a simple tank demo. Cloud resources can train a brain requiring half a million iterations in less than one hour.

In Figure 7, the progress of brain training is shown as a function of the number of iterations. The “Goal Satisfaction” parameter is a moving average, taken over training episodes, of the share of goals being met. Typically, a goal satisfaction value that reaches 100% is required to achieve effective control from the brain for all scenarios it has practiced on.
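
One plausible way to compute such a metric is a moving average, over recent episodes, of the fraction of goals met in each episode. The sketch below rests on that assumed reading; the class name and window size are hypothetical, and the training platform may calculate its figure differently.

```python
from collections import deque


class GoalSatisfaction:
    """Moving average of the fraction of goals met over the most recent episodes."""

    def __init__(self, window: int = 100):
        self.history = deque(maxlen=window)  # oldest episodes drop out automatically

    def record(self, goals_met: int, goals_total: int) -> None:
        self.history.append(goals_met / goals_total)

    def value(self) -> float:
        return sum(self.history) / len(self.history) if self.history else 0.0


tracker = GoalSatisfaction(window=3)
for met in (0, 1, 2, 2):          # four episodes, two goals each
    tracker.record(met, 2)
current = tracker.value()          # averages only the last three episodes
```

Because old episodes fall out of the window, the value can climb toward 100% even though early training episodes failed most goals.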

Assessment of trained brain

After a brain is trained, it needs to be tested to assess its viability. During this phase, the brain is run against the model to judge its behavior. However, this time the scenarios should be varied in the simulation, testing the brain with situations it may not have encountered during the original rounds of training.

For instance, if a value is controlled by a combination of three valves, what happens if one valve is now unavailable? Can the brain do something reasonable if one of the valves is stuck, or out for maintenance? This is where simulator models developed for operator trainer systems or control system testing can be adapted. As one would do with control system testing, the AI controller needs to be put through a rigorous formal testing procedure. A simulator with an automated test plan can significantly reduce the effort required to assess the “trained” brain.
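
An automated test plan for the three-valve example might look like the sketch below. The `allocate` function is a simple stand-in for the trained brain, an unavailable valve is modeled as fully closed, and the demand and tolerance are assumed numbers; none of this reflects a specific product's test tooling.

```python
def allocate(demand: float, available: list) -> list:
    """Stand-in policy: split demand equally across available valves, capped at fully open (1.0)."""
    n_available = sum(available)
    if n_available == 0:
        return [0.0] * len(available)
    share = min(demand / n_available, 1.0)
    return [share if ok else 0.0 for ok in available]


def run_test_plan(demand: float = 1.5, tolerance: float = 1e-6) -> dict:
    """Run each scenario and report whether the delivered total matches the demand."""
    scenarios = {
        "all valves available": [True, True, True],
        "valve 2 out for maintenance": [True, False, True],
        "only valve 3 available": [False, False, True],
    }
    results = {}
    for name, available in scenarios.items():
        delivered = sum(allocate(demand, available))
        results[name] = abs(delivered - demand) <= tolerance
    return results


report = run_test_plan()
```

In this sketch the first two scenarios pass and the third fails because one valve cannot carry the full demand, which is the kind of finding an assessment phase is meant to surface before deployment.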

Deployment of the brain

Once the brain has passed the assessment test, it can be deployed. While there are many modes of deployment, the unique advantage of using tag-based simulators used for testing control systems is they can be used as the middleware to integrate the brain with the control system. With a large assortment of available drivers for various control systems, integration into a customer-specific site is much easier than using a custom solution. Additionally, from a software maintenance perspective, minimizing the number of custom deployments is always appreciated.

Artificial intelligence use cases

DRL-based brains have been designed for over 100 use cases and have been deployed spanning a wide variety of industries and vertical markets.

Several use cases and their corresponding unique challenges or applications are presented to illustrate the power of DRL-based brains.

Building HVAC energy optimization

Optimizing energy use in a building while keeping CO2 levels below legal limits requires engineers to establish chiller plant setpoints that minimize cost while keeping indoor temperature within a narrow range. Complexity is added by ambient temperature changing through the day, as well as by seasonal variation in daily ranges. DRL-based controls take into account real-time weather data (such as ambient temperature and humidity) and use machine learning in conjunction with models of past relationships of performance and ambient conditions to deliver a more optimized solution.

Counterintuitive to what many humans might assume, the AI-recommended setpoints at the cooling towers and chillers are increased, while also increasing the speed of the pump(s) delivering water, to achieve the overall objective of energy reduction, while maintaining the desired temperature. As the world turns towards more sustainable solutions, DRL-based solutions can deliver more optimal energy efficiency to meet sustainability goals of corporations.

Food manufacturing quality control

Maximizing product quality and minimizing off-spec product is a common goal for many manufacturers. In this case, the expertise of human operators is needed to run equipment at proper conditions.

The AI-based solution integrates on-line video analysis with traditional process inputs, enabling the creation of a brain that controls multiple outputs simultaneously while respecting the limits of the processing equipment. The result is a faster response to process deviations and less off-spec product.

Chemical processing control

Polymer production requires close control of setpoints on the reactors. Typical challenges in polymer production include transient control, especially during the change from one grade of polymer to another, when the chance of off-spec production is high. The challenge is also to control optimally and deliver consistent outcomes. The number of control variables is large, and human experience is often relied on for control. Because this is a learned skill, outcomes vary with operator experience and the subjectivity involved. Deploying a trained brain that has explored the state space associated with the transient operation and that incorporates the wisdom of the most experienced and best-performing operators allows faster and more consistent operational advice to the operator and minimizes the amount of off-spec product.

The use cases above show a few applications, but AI technology use can extend to any complex problem that can be modeled using simulations. The types of problems where the technology can be applied are shown in Figure 8. A few other industry problems that fall into the categories listed are:

- Gas lift optimization in upstream oil and gas sector

- Controlling intermittent production upsets in topsides equipment in upstream oil and gas sector

- Refinery/chemical plant performance optimization and control

- Optimizing and controlling start-up sequence in chemical plants

- Logistics and supply chain optimization in discrete manufacturing sector

- Alarms rationalization.

AI for next-generation advanced control

AI-based, machine learning control systems show promise as the next evolution of advanced control, particularly for complex systems with large state spaces, partially measured states and non-linear correlations between variables. However, a few key pieces of technology are required to realize this promise.

Beyond the hype of a “digital twin,” learning agents must have access to a directionally accurate simulation model to practice on and a means of being deployed to make decisions in the plant. The end-to-end process of building AI learning agents for advanced process control consists of training the agent in simulation, teaching the agent across multiple optimization goals and scenarios, assessing the agent, and finally deploying the agent on the edge as a production control system.

Kence Anderson is the principal program manager, Autonomous Systems, with Microsoft. Winston Jenks is a technical director for Applied Intelligence with Wood, and Prabu Parthasarathy, PhD, is the vice president of Applied Intelligence with Wood, a system integrator and CFE Media and Technology content partner. Edited by Mark T. Hoske, content manager, Control Engineering, CFE Media and Technology.

KEYWORDS: Artificial intelligence, control systems, VP Link, Bonsai, simulation, reinforcement learning

CONSIDER THIS

Will you be giving your next control system the tools it needs to learn how to serve its applications better?