University of Missouri researchers received a $600,000 grant from the National Science Foundation to explore the future of cloud and edge computing.

Over the past decade or so, computing has moved into multiple clouds, allowing companies to broaden their storage capacity and users to retrieve information from any device with an internet connection.

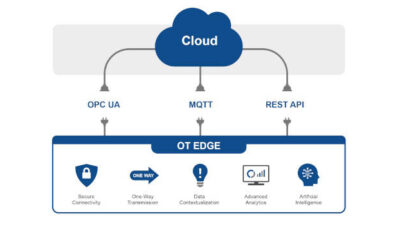

Not everything needs the large-scale hardware and equipment required to maintain a cloud, though. That’s where edge, or local, information technology architecture comes in. Edge computing processes data close to the originating source and provides an abundance of low-cost computational resources.

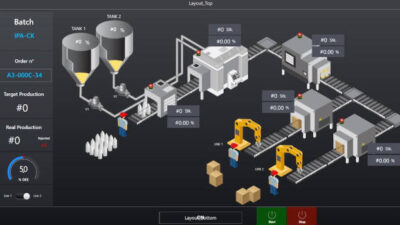

Now, Prasad Calyam at the University of Missouri is exploring how cloud and edge systems can work together, ensuring that information can be intelligently and securely transferred from one trustworthy platform to another in order to complete data-intensive workflows of scientific applications such as bioinformatics and manufacturing.

Calyam, who is the Greg L. Gilliom Professor of Cyber Security in the Department of Electrical Engineering and Computer Science, recently received a $600,000 grant from the National Science Foundation for the work.

“The goal is to develop a volunteer edge-cloud architecture and framework to support trusted resource allocation for data-intensive workflows,” said Calyam, who is also director of the Mizzou Center for Cyber Education, Research and Infrastructure.

Specifically, his work builds on the latest advances in Kubernetes, an open-source container orchestration system that automates software deployment and management across platforms.

“Kubernetes enables us to think about how to get cloud and edge to work together,” Calyam said. “In this project, what we’re trying to do is leverage a lightweight Kubernetes architecture to create containers of smaller pieces of programs that can be distributed between resource-constrained edge nodes and scalable cloud node resources.”

In other words, these containers can help intelligently orchestrate lightweight information processing at volunteer resources in local data centers and help with moving heavyweight information processing to a larger cloud environment.
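As a rough illustration of that split, the sketch below sends a task to the edge when it fits within a small resource budget and to the cloud otherwise. It is not the project's scheduler: the `Task` fields, the edge limits and the `place` function are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    cpu_cores: float   # requested CPU cores (hypothetical unit)
    memory_gb: float   # requested memory in gigabytes


# Assumed capacity of one resource-constrained edge node (illustrative values).
EDGE_CPU_LIMIT = 2.0
EDGE_MEMORY_LIMIT_GB = 4.0


def place(task: Task) -> str:
    """Return "edge" if the task fits within the edge limits, else "cloud"."""
    fits_edge = (
        task.cpu_cores <= EDGE_CPU_LIMIT
        and task.memory_gb <= EDGE_MEMORY_LIMIT_GB
    )
    return "edge" if fits_edge else "cloud"


# A two-step workflow: lightweight inference and heavyweight training.
workflow = [
    Task("inference", cpu_cores=1.0, memory_gb=2.0),
    Task("training", cpu_cores=16.0, memory_gb=64.0),
]
placement = {task.name: place(task) for task in workflow}
```

A real orchestrator would weigh far more than two resource figures, but the same fits-or-escalates decision sits at the core of the idea.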

In the new and emerging volunteer edge-cloud computing paradigm, collaborators in a community with various backgrounds contribute their resources to form a distributed infrastructure to execute scientific workflows.

Calyam will first consider what could go wrong when trying to share multiple cloud and volunteer edge resources and how to contain those threats. Then, his team will consider policies and guidelines to ensure dynamic selection of only those volunteer edge resources that are trustworthy, scalable and reliable.
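One way to picture such a selection policy is a filter over candidate volunteer nodes. The sketch below is an assumption-laden example, not the team's algorithm: the trust and availability scores, the thresholds and the names `VolunteerNode` and `select_nodes` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class VolunteerNode:
    name: str
    trust: float         # hypothetical trust score between 0 and 1
    availability: float  # hypothetical fraction of time the node is reachable
    free_cores: int      # CPU cores currently available


def select_nodes(
    nodes: Iterable[VolunteerNode],
    min_trust: float = 0.8,
    min_availability: float = 0.9,
    cores_needed: int = 1,
) -> List[VolunteerNode]:
    """Keep nodes that meet every threshold, most trusted first."""
    eligible = [
        node
        for node in nodes
        if node.trust >= min_trust
        and node.availability >= min_availability
        and node.free_cores >= cores_needed
    ]
    return sorted(
        eligible,
        key=lambda node: (node.trust, node.availability),
        reverse=True,
    )


candidates = [
    VolunteerNode("lab-a", trust=0.95, availability=0.99, free_cores=4),
    VolunteerNode("lab-b", trust=0.60, availability=0.99, free_cores=8),
    VolunteerNode("lab-c", trust=0.85, availability=0.70, free_cores=4),
    VolunteerNode("lab-d", trust=0.90, availability=0.95, free_cores=2),
]
selected = [node.name for node in select_nodes(candidates, cores_needed=2)]
```

Because volunteer resources come and go, a policy like this would have to be re-evaluated as scores change rather than applied once.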

The research is critical at a time when applications are increasingly optimized for high performance or high security by relying on machine learning models that are trained on large amounts of data in the cloud using heavyweight resources, while edge resources handle lightweight inference, he said.

“The key challenge in this project is to increase the wide adoption of volunteer cloud-edge computing,” Calyam said. “The paradigm of volunteer cloud-edge computing provides many additional benefits and controls for improving how we handle workflows of scientific applications today. We are developing algorithms to automatically prescribe resource management policies that are usable and relevant, especially when a large number of volunteer edge resources are being leveraged that have intermittent performance and availability.”

– Edited by Chris Vavra, web content manager, Control Engineering, CFE Media and Technology.